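The first step in running TensorFlow in Docker is to find the image you need; the official TensorFlow images are on Docker Hub. They come in two kinds: images that run on the CPU and images that run on the GPU. The GPU images additionally require nvidia-docker. I use a CPU image here. You also need to pick a specific TensorFlow version. My project uses TensorFlow 1.9.0, so I pulled the image with: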

docker pull tensorflow/tensorflow:1.9.0-devel-py3

Once the pull succeeds, running docker images shows the tensorflow image.

### Using TensorFlow in Docker

docker run -it -p 8888:8888 --name tf-1.9 tensorflow/tensorflow:1.9.0-devel-py3

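This command starts a container from the image. -p sets up a port mapping, forwarding port 8888 on the host to port 8888 in the container. --name names the container tf-1.9. Because this is a devel image, it does not start Jupyter by default. If you want an image that starts Jupyter automatically, pull a tag without devel, for example the latest image: docker pull tensorflow/tensorflow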
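To make the flags concrete, here is a minimal Python sketch that assembles the same docker run command as an argument list, which is the form subprocess.run accepts. The helper docker_run_args is hypothetical. It only builds the list and does not talk to Docker. Anything placed after the image name replaces the image's default command, which is how /bin/bash is passed later in this post.

```python
from typing import Dict, List, Optional


def docker_run_args(image: str, name: str,
                    ports: Optional[Dict[int, int]] = None,
                    command: Optional[List[str]] = None) -> List[str]:
    """Build a `docker run -it` argument list (hypothetical helper).

    `ports` maps host port -> container port; each pair becomes `-p HOST:CONTAINER`.
    """
    args = ["docker", "run", "-it"]
    for host_port, container_port in (ports or {}).items():
        args += ["-p", "{}:{}".format(host_port, container_port)]
    args += ["--name", name, image]
    # Anything after the image name overrides the image's default command.
    args += command or []
    return args


if __name__ == "__main__":
    cmd = docker_run_args("tensorflow/tensorflow:1.9.0-devel-py3", "tf-1.9",
                          ports={8888: 8888})
    print(" ".join(cmd))
    # To actually start the container: subprocess.run(cmd, check=True)
```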

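Once the container starts, you are inside it. Running ls / lists the root directory, which contains run_jupyter.sh. Run it from the root directory with ./run_jupyter.sh --allow-root. The container shell runs as root, and Jupyter refuses to start as root unless --allow-root is given, so this flag explicitly permits it. You can then open Jupyter in a browser on the host at port 8888, using the token that Jupyter prints in the terminal.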

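So far we have only run an empty image. How do we run our own deep learning program in a container? The first step is to build our own image. Here we train a simple model on the MNIST dataset, starting from one of TensorFlow's example programs. My version of the program is: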

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Simple, end-to-end, LeNet-5-like convolutional MNIST model example.

This should achieve a test error of 0.7%. Please keep this model as simple and
linear as possible, it is meant as a tutorial for simple convolutional models.

Run with --self_test on the command line to execute a short self-test.
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
import gzip
import os
import sys
import time
import logging

import numpy
from six.moves import urllib
from six.moves import xrange  # pylint: disable=redefined-builtin
import tensorflow as tf

# CVDF mirror of http://yann.lecun.com/exdb/mnist/
# If the required files are not in WORK_DIRECTORY, they are downloaded from here.
SOURCE_URL = 'https://storage.googleapis.com/cvdf-datasets/mnist/'
# Location of the training data
# WORK_DIRECTORY = 'data'
WORK_DIRECTORY = './MNIST-data'
IMAGE_SIZE = 28
NUM_CHANNELS = 1
PIXEL_DEPTH = 255
NUM_LABELS = 10
VALIDATION_SIZE = 5000  # Size of the validation set.
SEED = 66478  # Set to None for random seed.
BATCH_SIZE = 64
NUM_EPOCHS = 10
EVAL_BATCH_SIZE = 64
EVAL_FREQUENCY = 100  # Number of steps between evaluations.

FLAGS = None

# Logging setup
# logging.basicConfig(format='%(asctime)s - %(pathname)s[line:%(lineno)d] - %(levelname)s: %(message)s',
#                     level=logging.DEBUG)
logging.basicConfig(level=logging.DEBUG,  # lowest level of messages to record
                    filename='cnn_mnist.log',
                    # 'w' overwrites the log on every run; 'a' appends to it
                    # ('a' is also the default if filemode is omitted)
                    filemode='a',
                    # log line format
                    format='%(asctime)s - %(pathname)s[line:%(lineno)d] - %(levelname)s: %(message)s')

def data_type():
    """Return the type of the activations, weights, and placeholder variables."""
    if FLAGS.use_fp16:
        return tf.float16
    else:
        return tf.float32


def maybe_download(filename):
    """Download the data from Yann's website, unless it's already here."""
    if not tf.gfile.Exists(WORK_DIRECTORY):
        tf.gfile.MakeDirs(WORK_DIRECTORY)
    filepath = os.path.join(WORK_DIRECTORY, filename)
    if not tf.gfile.Exists(filepath):
        filepath, _ = urllib.request.urlretrieve(SOURCE_URL + filename, filepath)
        with tf.gfile.GFile(filepath) as f:
            size = f.size()
        print('Successfully downloaded', filename, size, 'bytes.')
    return filepath


def extract_data(filename, num_images):
    """Extract the images into a 4D tensor [image index, y, x, channels].

    Values are rescaled from [0, 255] down to [-0.5, 0.5].
    """
    logging.info('Extracting %s', filename)
    print('Extracting', filename)
    with gzip.open(filename) as bytestream:
        bytestream.read(16)
        buf = bytestream.read(IMAGE_SIZE * IMAGE_SIZE * num_images * NUM_CHANNELS)
        data = numpy.frombuffer(buf, dtype=numpy.uint8).astype(numpy.float32)
        data = (data - (PIXEL_DEPTH / 2.0)) / PIXEL_DEPTH
        data = data.reshape(num_images, IMAGE_SIZE, IMAGE_SIZE, NUM_CHANNELS)
        return data


def extract_labels(filename, num_images):
    """Extract the labels into a vector of int64 label IDs."""
    logging.info('Extracting %s', filename)
    print('Extracting', filename)
    with gzip.open(filename) as bytestream:
        bytestream.read(8)
        buf = bytestream.read(1 * num_images)
        labels = numpy.frombuffer(buf, dtype=numpy.uint8).astype(numpy.int64)
    return labels


def fake_data(num_images):
    """Generate a fake dataset that matches the dimensions of MNIST."""
    data = numpy.ndarray(
        shape=(num_images, IMAGE_SIZE, IMAGE_SIZE, NUM_CHANNELS),
        dtype=numpy.float32)
    labels = numpy.zeros(shape=(num_images,), dtype=numpy.int64)
    for image in xrange(num_images):
        label = image % 2
        data[image, :, :, 0] = label - 0.5
        labels[image] = label
    return data, labels


def error_rate(predictions, labels):
    """Return the error rate based on dense predictions and sparse labels."""
    return 100.0 - (
        100.0 *
        numpy.sum(numpy.argmax(predictions, 1) == labels) /
        predictions.shape[0])

def main(_):
    if FLAGS.self_test:
        logging.info('Running self-test.')
        print('Running self-test.')
        train_data, train_labels = fake_data(256)
        validation_data, validation_labels = fake_data(EVAL_BATCH_SIZE)
        test_data, test_labels = fake_data(EVAL_BATCH_SIZE)
        num_epochs = 1
    else:
        # Get the data.
        train_data_filename = maybe_download('train-images-idx3-ubyte.gz')
        train_labels_filename = maybe_download('train-labels-idx1-ubyte.gz')
        test_data_filename = maybe_download('t10k-images-idx3-ubyte.gz')
        test_labels_filename = maybe_download('t10k-labels-idx1-ubyte.gz')

        # Extract it into numpy arrays.
        train_data = extract_data(train_data_filename, 60000)
        train_labels = extract_labels(train_labels_filename, 60000)
        test_data = extract_data(test_data_filename, 10000)
        test_labels = extract_labels(test_labels_filename, 10000)

        # Generate a validation set.
        validation_data = train_data[:VALIDATION_SIZE, ...]
        validation_labels = train_labels[:VALIDATION_SIZE]
        train_data = train_data[VALIDATION_SIZE:, ...]
        train_labels = train_labels[VALIDATION_SIZE:]
        num_epochs = NUM_EPOCHS
    train_size = train_labels.shape[0]

    # This is where training samples and labels are fed to the graph.
    # These placeholder nodes will be fed a batch of training data at each
    # training step using the {feed_dict} argument to the Run() call below.
    train_data_node = tf.placeholder(
        data_type(),
        shape=(BATCH_SIZE, IMAGE_SIZE, IMAGE_SIZE, NUM_CHANNELS))
    train_labels_node = tf.placeholder(tf.int64, shape=(BATCH_SIZE,))
    eval_data = tf.placeholder(
        data_type(),
        shape=(EVAL_BATCH_SIZE, IMAGE_SIZE, IMAGE_SIZE, NUM_CHANNELS))

    # The variables below hold all the trainable weights. They are passed an
    # initial value which will be assigned when we call:
    # {tf.global_variables_initializer().run()}
    conv1_weights = tf.Variable(
        tf.truncated_normal([5, 5, NUM_CHANNELS, 32],  # 5x5 filter, depth 32.
                            stddev=0.1,
                            seed=SEED, dtype=data_type()))
    conv1_biases = tf.Variable(tf.zeros([32], dtype=data_type()))
    conv2_weights = tf.Variable(tf.truncated_normal(
        [5, 5, 32, 64], stddev=0.1,
        seed=SEED, dtype=data_type()))
    conv2_biases = tf.Variable(tf.constant(0.1, shape=[64], dtype=data_type()))
    fc1_weights = tf.Variable(  # fully connected, depth 512.
        tf.truncated_normal([IMAGE_SIZE // 4 * IMAGE_SIZE // 4 * 64, 512],
                            stddev=0.1,
                            seed=SEED,
                            dtype=data_type()))
    fc1_biases = tf.Variable(tf.constant(0.1, shape=[512], dtype=data_type()))
    fc2_weights = tf.Variable(tf.truncated_normal([512, NUM_LABELS],
                                                  stddev=0.1,
                                                  seed=SEED,
                                                  dtype=data_type()))
    fc2_biases = tf.Variable(tf.constant(
        0.1, shape=[NUM_LABELS], dtype=data_type()))

    # We will replicate the model structure for the training subgraph, as well
    # as the evaluation subgraphs, while sharing the trainable parameters.
    def model(data, train=False):
        """The Model definition."""
        # 2D convolution, with 'SAME' padding (i.e. the output feature map has
        # the same size as the input). Note that {strides} is a 4D array whose
        # shape matches the data layout: [image index, y, x, depth].
        conv = tf.nn.conv2d(data,
                            conv1_weights,
                            strides=[1, 1, 1, 1],
                            padding='SAME')
        # Bias and rectified linear non-linearity.
        relu = tf.nn.relu(tf.nn.bias_add(conv, conv1_biases))
        # Max pooling. The kernel size spec {ksize} also follows the layout of
        # the data. Here we have a pooling window of 2, and a stride of 2.
        pool = tf.nn.max_pool(relu,
                              ksize=[1, 2, 2, 1],
                              strides=[1, 2, 2, 1],
                              padding='SAME')
        conv = tf.nn.conv2d(pool,
                            conv2_weights,
                            strides=[1, 1, 1, 1],
                            padding='SAME')
        relu = tf.nn.relu(tf.nn.bias_add(conv, conv2_biases))
        pool = tf.nn.max_pool(relu,
                              ksize=[1, 2, 2, 1],
                              strides=[1, 2, 2, 1],
                              padding='SAME')
        # Reshape the feature map cuboid into a 2D matrix to feed it to the
        # fully connected layers.
        pool_shape = pool.get_shape().as_list()
        reshape = tf.reshape(
            pool,
            [pool_shape[0], pool_shape[1] * pool_shape[2] * pool_shape[3]])
        # Fully connected layer. Note that the '+' operation automatically
        # broadcasts the biases.
        hidden = tf.nn.relu(tf.matmul(reshape, fc1_weights) + fc1_biases)
        # Add a 50% dropout during training only. Dropout also scales
        # activations such that no rescaling is needed at evaluation time.
        if train:
            hidden = tf.nn.dropout(hidden, 0.5, seed=SEED)
        return tf.matmul(hidden, fc2_weights) + fc2_biases

    # Training computation: logits + cross-entropy loss.
    logits = model(train_data_node, True)
    loss = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(
        labels=train_labels_node, logits=logits))

    # L2 regularization for the fully connected parameters.
    regularizers = (tf.nn.l2_loss(fc1_weights) + tf.nn.l2_loss(fc1_biases) +
                    tf.nn.l2_loss(fc2_weights) + tf.nn.l2_loss(fc2_biases))
    # Add the regularization term to the loss.
    loss += 5e-4 * regularizers

    # Optimizer: set up a variable that's incremented once per batch and
    # controls the learning rate decay.
    batch = tf.Variable(0, dtype=data_type())
    # Decay once per epoch, using an exponential schedule starting at 0.01.
    learning_rate = tf.train.exponential_decay(
        0.01,                # Base learning rate.
        batch * BATCH_SIZE,  # Current index into the dataset.
        train_size,          # Decay step.
        0.95,                # Decay rate.
        staircase=True)
    # Use simple momentum for the optimization.
    optimizer = tf.train.MomentumOptimizer(learning_rate, 0.9).minimize(
        loss, global_step=batch)

    # Predictions for the current training minibatch.
    train_prediction = tf.nn.softmax(logits)

    # Predictions for the test and validation, which we'll compute less often.
    eval_prediction = tf.nn.softmax(model(eval_data))

    # Small utility function to evaluate a dataset by feeding batches of data to
    # {eval_data} and pulling the results from {eval_predictions}.
    # Saves memory and enables this to run on smaller GPUs.
    def eval_in_batches(data, sess):
        """Get all predictions for a dataset by running it in small batches."""
        size = data.shape[0]
        if size < EVAL_BATCH_SIZE:
            logging.error("batch size for evals larger than dataset: %d" % size)
            raise ValueError("batch size for evals larger than dataset: %d" % size)
        predictions = numpy.ndarray(shape=(size, NUM_LABELS), dtype=numpy.float32)
        for begin in xrange(0, size, EVAL_BATCH_SIZE):
            end = begin + EVAL_BATCH_SIZE
            if end <= size:
                predictions[begin:end, :] = sess.run(
                    eval_prediction,
                    feed_dict={eval_data: data[begin:end, ...]})
            else:
                batch_predictions = sess.run(
                    eval_prediction,
                    feed_dict={eval_data: data[-EVAL_BATCH_SIZE:, ...]})
                predictions[begin:, :] = batch_predictions[begin - size:, :]
        return predictions

    # Create a local session to run the training.
    start_time = time.time()
    with tf.Session() as sess:
        # Run all the initializers to prepare the trainable parameters.
        tf.global_variables_initializer().run()
        logging.info('Initialized!')
        print('Initialized!')
        # Loop through training steps.
        for step in xrange(int(num_epochs * train_size) // BATCH_SIZE):
            # Compute the offset of the current minibatch in the data.
            # Note that we could use better randomization across epochs.
            offset = (step * BATCH_SIZE) % (train_size - BATCH_SIZE)
            batch_data = train_data[offset:(offset + BATCH_SIZE), ...]
            batch_labels = train_labels[offset:(offset + BATCH_SIZE)]
            # This dictionary maps the batch data (as a numpy array) to the
            # node in the graph it should be fed to.
            feed_dict = {train_data_node: batch_data,
                         train_labels_node: batch_labels}
            # Run the optimizer to update weights.
            sess.run(optimizer, feed_dict=feed_dict)
            # Print some extra information once we reach the evaluation frequency.
            if step % EVAL_FREQUENCY == 0:
                # Fetch some extra nodes' data.
                l, lr, predictions = sess.run([loss, learning_rate, train_prediction],
                                              feed_dict=feed_dict)
                elapsed_time = time.time() - start_time
                start_time = time.time()
                # Compute each value once and reuse it for the log file and the console.
                epoch = float(step) * BATCH_SIZE / train_size
                ms_per_step = 1000 * elapsed_time / EVAL_FREQUENCY
                logging.info('Step %d (epoch %.2f), %.1f ms' % (step, epoch, ms_per_step))
                print('Step %d (epoch %.2f), %.1f ms' % (step, epoch, ms_per_step))
                logging.info('Minibatch loss: %.3f, learning rate: %.6f' % (l, lr))
                print('Minibatch loss: %.3f, learning rate: %.6f' % (l, lr))
                minibatch_error = error_rate(predictions, batch_labels)
                logging.info('Minibatch error: %.1f%%' % minibatch_error)
                print('Minibatch error: %.1f%%' % minibatch_error)
                validation_error = error_rate(
                    eval_in_batches(validation_data, sess), validation_labels)
                logging.info('Validation error: %.1f%%' % validation_error)
                print('Validation error: %.1f%%' % validation_error)
                sys.stdout.flush()
        # Finally print the result!
        test_error = error_rate(eval_in_batches(test_data, sess), test_labels)
        logging.info('Test error: %.1f%%' % test_error)
        print('Test error: %.1f%%' % test_error)
        if FLAGS.self_test:
            # test_error is a float, so format it rather than concatenating it to a str.
            logging.info('test_error: %.2f' % test_error)
            print('test_error', test_error)
            assert test_error == 0.0, 'expected 0.0 test_error, got %.2f' % (
                test_error,)

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument(
        '--use_fp16',
        default=False,
        help='Use half floats instead of full floats if True.',
        action='store_true')
    parser.add_argument(
        '--self_test',
        default=False,
        action='store_true',
        help='True if running a self test.')
    FLAGS, unparsed = parser.parse_known_args()
    tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
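The optimizer comments say the learning rate decays once per epoch on an exponential schedule starting at 0.01. With staircase=True, tf.train.exponential_decay computes 0.01 * 0.95 ** floor(global_step * BATCH_SIZE / train_size). The pure-Python sketch below reproduces that arithmetic without TensorFlow. It assumes the normal, non-self-test run, where 5,000 of the 60,000 training images are held out for validation, which leaves a train_size of 55,000. The function name staircase_lr is illustrative.

```python
import math

BASE_LR = 0.01             # base learning rate used in the script
DECAY_RATE = 0.95          # decay rate used in the script
BATCH_SIZE = 64
TRAIN_SIZE = 60000 - 5000  # training images minus VALIDATION_SIZE (assumed non-self-test run)


def staircase_lr(global_step: int) -> float:
    """Learning rate after `global_step` optimizer updates (staircase schedule)."""
    samples_seen = global_step * BATCH_SIZE   # "current index into the dataset"
    epochs_done = samples_seen // TRAIN_SIZE  # staircase=True -> integer division
    return BASE_LR * DECAY_RATE ** epochs_done


if __name__ == "__main__":
    steps_per_epoch = math.ceil(TRAIN_SIZE / BATCH_SIZE)
    # The rate stays flat within an epoch and drops by 5% at each epoch boundary.
    for step in (0, steps_per_epoch - 1, steps_per_epoch, 5 * steps_per_epoch):
        print(step, round(staircase_lr(step), 6))
```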

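I modified the original program slightly. Because I had already downloaded the data, the program reads the training data directly from a local directory (WORK_DIRECTORY = './MNIST-data'). I also added output to a log file (cnn_mnist.log). I did this to test retrieving a container's output files on my company's container cloud platform.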
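Because the script only downloads a file when it is missing from WORK_DIRECTORY, it is worth confirming that all four archives are present before baking them into an image. If any are missing, the container will try to reach SOURCE_URL when it starts. The following is a minimal sketch that uses the file names passed to maybe_download in the script. The helper missing_mnist_files is hypothetical.

```python
import os
from typing import List

# File names requested by maybe_download() in convolutional.py.
MNIST_FILES = [
    "train-images-idx3-ubyte.gz",
    "train-labels-idx1-ubyte.gz",
    "t10k-images-idx3-ubyte.gz",
    "t10k-labels-idx1-ubyte.gz",
]


def missing_mnist_files(work_dir: str = "./MNIST-data") -> List[str]:
    """Return the MNIST archives not yet present in work_dir (hypothetical helper)."""
    return [name for name in MNIST_FILES
            if not os.path.isfile(os.path.join(work_dir, name))]


if __name__ == "__main__":
    missing = missing_mnist_files()
    if missing:
        print("Missing, would be downloaded at runtime:", missing)
    else:
        print("All MNIST files present; no download needed.")
```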

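Create an empty folder under your home directory and copy the MNIST dataset and the program file into it. Strictly speaking, the dataset belongs in a data volume, but for convenience I baked the training data directly into the image. Then create a Dockerfile with the following contents: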

FROM tensorflow/tensorflow:1.9.0-devel-py3

COPY . /home/ll

WORKDIR /home/ll

CMD ["python", "convolutional.py"]

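The last line of the Dockerfile sets the command the container runs when it starts; in this exec form the arguments must be in double quotes. (Note: in my actual runs, some Python dependencies were missing. You can change the last instruction to CMD ["sh","-c","apt-get update && apt-get install -y python3-tk && python ssd_notebook_result.py"] so that the container installs the dependencies first and then runs the program. The -y flag answers apt-get's confirmation prompt automatically.)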
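Docker parses the exec form of CMD as a JSON array. When the text is not valid JSON, as with a single-quoted CMD ['python', 'convolutional.py'], Docker falls back to the shell form and passes the whole line to /bin/sh -c, which fails. A minimal sketch that avoids quoting mistakes by generating the CMD line with json.dumps, which always emits double-quoted strings. render_dockerfile is a hypothetical helper. The second call shows the sh -c variant from the note, with convolutional.py as the script.

```python
import json
from typing import List


def render_dockerfile(base_image: str, workdir: str, cmd: List[str]) -> str:
    """Render the Dockerfile used in this post (hypothetical helper).

    json.dumps always emits double-quoted strings, which is what the
    exec form of CMD requires.
    """
    lines = [
        "FROM {}".format(base_image),
        "COPY . {}".format(workdir),
        "WORKDIR {}".format(workdir),
        "CMD {}".format(json.dumps(cmd)),
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Plain exec form: run the training script directly.
    print(render_dockerfile("tensorflow/tensorflow:1.9.0-devel-py3",
                            "/home/ll", ["python", "convolutional.py"]))
    # Exec form that starts a shell, so several commands can be chained with &&.
    print(render_dockerfile("tensorflow/tensorflow:1.9.0-devel-py3", "/home/ll",
                            ["sh", "-c", "apt-get update && apt-get install -y "
                             "python3-tk && python convolutional.py"]))
```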

docker build -t tf:1.9 .

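-t sets the image name and tag, and the trailing . sets the build context: the current directory, whose contents COPY . copies into the image. After the build, docker images shows the new tf:1.9 image.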
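The whole build context is sent to the Docker daemon, and COPY . /home/ll copies all of it into the image, including the MNIST archives. The rough Python sketch below lists the context's files and their total size so you can check them before building. It assumes there is no .dockerignore file, since one would exclude some paths. It also skips broken symlinks. context_summary is a hypothetical helper.

```python
import os
from typing import List, Tuple


def context_summary(root: str = ".") -> Tuple[List[str], int]:
    """Return (sorted relative file paths, total bytes) under root.

    Assumes no .dockerignore, so every regular file would be sent as build context.
    """
    paths, total = [], 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if not os.path.isfile(full):  # skip broken symlinks and special files
                continue
            paths.append(os.path.relpath(full, root))
            total += os.path.getsize(full)
    return sorted(paths), total


if __name__ == "__main__":
    files, size = context_summary(".")
    for path in files:
        print(path)
    print("%d files, %.1f MB would be copied to /home/ll" % (len(files), size / 1e6))
```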

Run the image built in the previous step with the following command:

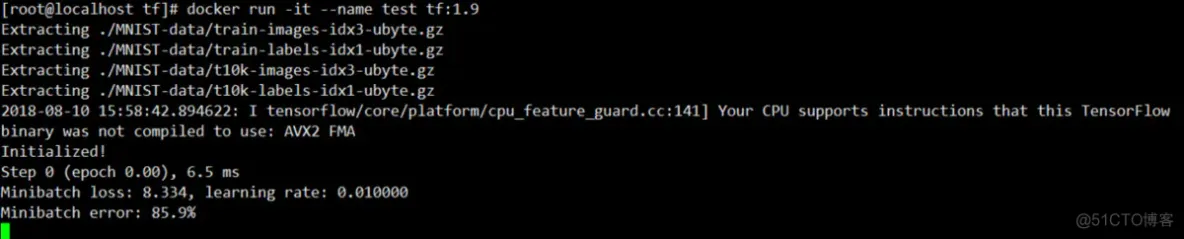

docker run -it --name test tf:1.9

You should then see the training output.

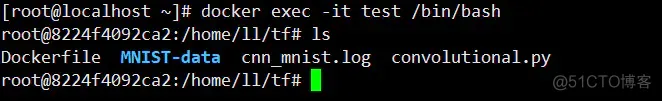

At the same time, you can open a second terminal and enter the running container with:

docker exec -it test /bin/bash

Inside the container you can see the log output file cnn_mnist.log in the working directory /home/ll.
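Every evaluation step is also written to cnn_mnist.log, so you can summarize the log after training. You can first copy it out of the container with docker cp test:/home/ll/cnn_mnist.log . The sketch below extracts the validation and test error percentages. It assumes the message formats used in the script, Validation error: %.1f%% and Test error: %.1f%%. Because the log is opened in append mode, lines from earlier runs are included too. parse_errors is a hypothetical helper.

```python
import os
import re
from typing import Dict, Iterable

VALIDATION_RE = re.compile(r"Validation error: ([0-9.]+)%")
TEST_RE = re.compile(r"Test error: ([0-9.]+)%")


def parse_errors(lines: Iterable[str]) -> Dict[str, object]:
    """Collect validation errors (in order) and the last test error from log lines."""
    validation = []
    test = None
    for line in lines:
        m = VALIDATION_RE.search(line)
        if m:
            validation.append(float(m.group(1)))
            continue
        m = TEST_RE.search(line)
        if m:
            test = float(m.group(1))
    return {"validation": validation, "test": test}


if __name__ == "__main__":
    log_path = "cnn_mnist.log"
    if os.path.exists(log_path):
        with open(log_path, encoding="utf-8") as f:
            summary = parse_errors(f)
        print("validation error per evaluation:", summary["validation"])
        print("final test error:", summary["test"])
    else:
        print("%s not found" % log_path)
```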

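In practice things did not go so smoothly. When I ran a container similar to the one above, it failed repeatedly. The cause turned out to be missing Python dependencies, probably because some packages are not installed in this image version. My workaround was to start a container from the base image and enter it, install the missing dependencies inside it, and stop the container. I then committed that container as a new image and repeated the image-build steps above. The details follow.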
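Before committing a patched container, you can check from inside it which Python modules are still missing. A minimal sketch using importlib.util.find_spec. The module list is only an example to adapt to your program. On Debian/Ubuntu, tkinter is the module that the python3-tk package provides. The sketch avoids f-strings so it also runs on older Python 3 versions.

```python
import importlib.util
from typing import Iterable, List

# Example list: modules convolutional.py imports, plus tkinter (installed by python3-tk).
REQUIRED = ["numpy", "six", "tensorflow", "tkinter"]


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the names that cannot be found by this interpreter's import system."""
    missing = []
    for name in names:
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            # A parent package is missing, or the module is in a broken state.
            found = False
        if not found:
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("missing:", missing_modules(REQUIRED) or "none")
```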

### Creating an image from a container

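- Start a container from the base image. If the tf-1.9 container created earlier still exists, remove it first with docker rm -f tf-1.9 or pick another name, since container names must be unique.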

docker run -it --name tf-1.9 tensorflow/tensorflow:1.9.0-devel-py3 /bin/bash

- Install the dependencies inside the container

apt-get update

apt-get install python3-tk

- Exit the container (it stops when its /bin/bash exits), then commit it as a new image

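docker commit -a "chengh97" -m "tensorflow:1.9.0-devel-py3 patch version" c982c62b168d tensorflow/tensorflow:1.9.0-devel-py3-patch

Here -a sets the author, -m sets the commit message, c982c62b168d is the container's ID (docker ps -a lists it), and the last argument is the name and tag of the new image.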

- Write the Dockerfile

As before, put the MNIST dataset and the program file in an empty folder under your home directory, then create a Dockerfile that uses the patched image as its base:

FROM tensorflow/tensorflow:1.9.0-devel-py3-patch

COPY . /home/ll

WORKDIR /home/ll

CMD ["python", "convolutional.py"]

Again, the last line sets the command the container runs when it starts. Because python3-tk is already installed in the patched image, the container no longer needs to run apt-get before starting the program.

- Build the image

docker build -t tf:1.9 .

As before, -t sets the image name and tag, and the trailing . is the build context. After the build, docker images shows the rebuilt tf:1.9 image.

- Run the image

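Run the image built in the previous step with the following command. If the test container from the first run still exists, remove it first with docker rm -f test, since container names must be unique.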

docker run -it --name test tf:1.9
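In the commit step above, c982c62b168d is the ID of my container. docker commit also accepts a container name, so the name given with --name (tf-1.9) is easier to script than an ID that changes on every machine. The sketch below builds the stop and commit commands as argument lists, the form subprocess.run accepts. commit_steps is a hypothetical helper, and the sketch does not execute anything.

```python
from typing import List


def commit_steps(container: str, image: str, author: str,
                 message: str) -> List[List[str]]:
    """Return the docker commands that freeze a patched container (hypothetical helper).

    `container` may be a container name or ID; `image` is the new REPOSITORY:TAG.
    """
    return [
        # Stop the container first, following the steps in this post.
        ["docker", "stop", container],
        # -a records the author, -m the commit message.
        ["docker", "commit", "-a", author, "-m", message, container, image],
    ]


if __name__ == "__main__":
    for cmd in commit_steps("tf-1.9", "tensorflow/tensorflow:1.9.0-devel-py3-patch",
                            "chengh97", "tensorflow:1.9.0-devel-py3 patch version"):
        print(cmd)
        # subprocess.run(cmd, check=True) would execute each step in order.
```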

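Disclaimer: This article is reposted or adapted from the web, and the original author could not be identified. Copyright belongs to the original author. For any copyright concerns, please contact us to have it removed.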
