
YOLOv5 source code: https://github.com/ultralytics/yolov5. The complete code is provided at the end of this article.

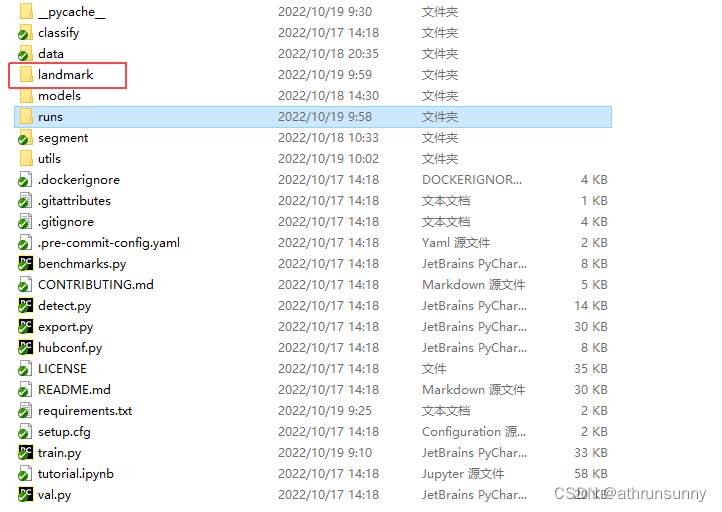

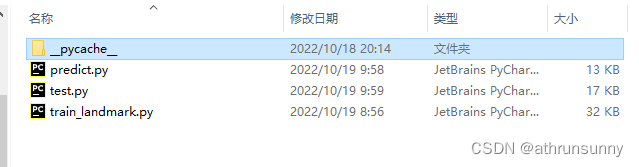

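Clone the latest YOLOv5 and create a landmark folder in the repository root.

Create three files in that folder (predict, test, train_landmark), mirroring how YOLOv5 organizes its classification and semantic segmentation tasks.

Any file not described as modified can simply be added as-is.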
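The folder setup described above can be sketched in a few lines of Python. This is only a convenience sketch, not part of the original project: the helper name `scaffold_landmark_dir` is hypothetical, and the `.py` extension on `predict` is an assumption based on the other two file names used in this article.

```python
from pathlib import Path

# File names follow the article; the ".py" extension for predict is assumed.
LANDMARK_FILES = ("predict.py", "test.py", "train_landmark.py")


def scaffold_landmark_dir(yolov5_root):
    """Create <yolov5_root>/landmark with empty placeholder scripts.

    Hypothetical helper: existing files are left untouched, so it is
    safe to run again after the scripts have been filled in.
    """
    landmark_dir = Path(yolov5_root) / "landmark"
    landmark_dir.mkdir(parents=True, exist_ok=True)
    created = []
    for name in LANDMARK_FILES:
        path = landmark_dir / name
        path.touch(exist_ok=True)  # creates the file only if it is missing
        created.append(path)
    return created
```

Calling `scaffold_landmark_dir("yolov5")` from the directory that contains the cloned repository would create the three empty scripts, which can then be filled with the listings that follow.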

landmark/train_landmark.py

```python
import argparse
import logging
import math
import os
import sys
import random
import time
from pathlib import Path
from threading import Thread
from warnings import warn

import numpy as np
import torch.distributed as dist
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
import torch.optim.lr_scheduler as lr_scheduler
import torch.utils.data
import yaml
from torch.cuda import amp
from torch.nn.parallel import DistributedDataParallel as DDP
from torch.utils.tensorboard import SummaryWriter
from tqdm import tqdm

FILE = Path(__file__).resolve()
ROOT = FILE.parents[1]  # YOLOv5 root directory
if str(ROOT) not in sys.path:
    sys.path.append(str(ROOT))  # add ROOT to PATH
ROOT = Path(os.path.relpath(ROOT, Path.cwd()))  # relative

import landmark.test as test  # import test.py to get mAP after each epoch
from models.experimental import attempt_load
from models.yolo import Model
from utils.callbacks import Callbacks
from utils.autoanchor import check_anchors
# from utils.facedataloaders import create_dataloader
from utils.landmark.dataloaders import create_dataloader
from utils.general import labels_to_class_weights, increment_path, labels_to_image_weights, init_seeds, \
    fitness, strip_optimizer, get_latest_run, check_dataset, check_file, check_git_status, check_img_size, \
    print_mutation, set_logging, intersect_dicts, check_suffix, check_yaml, colorstr, LOGGER
from utils.downloads import attempt_download, is_url
from utils.landmark.loss import compute_loss
from utils.landmark.plots import plot_labelsf, plot_resultsf, plot_evolution
from utils.plots import plot_images
from utils.torch_utils import ModelEMA, select_device, torch_distributed_zero_first

logger = logging.getLogger(__name__)

try:
    import wandb
except ImportError:
    wandb = None
    # logger.info("Install Weights & Biases for experiment logging via 'pip install wandb' (recommended)")


def train(hyp, opt, device, tb_writer=None, wandb=None):
    logger.info(f'Hyperparameters {hyp}')
    save_dir, epochs, batch_size, total_batch_size, weights, rank = \
        Path(opt.save_dir), opt.epochs, opt.batch_size, opt.total_batch_size, opt.weights, opt.global_rank

    # Directories
    wdir = save_dir / 'weights'
    wdir.mkdir(parents=True, exist_ok=True)  # make dir
    last = wdir / 'last.pt'
    best = wdir / 'best.pt'
    results_file = save_dir / 'results.txt'

    # Save run settings
    with open(save_dir / 'hyp.yaml', 'w') as f:
        yaml.dump(hyp, f, sort_keys=False)
    with open(save_dir / 'opt.yaml', 'w') as f:
        yaml.dump(vars(opt), f, sort_keys=False)

    # Configure
    plots = not opt.evolve  # create plots
    cuda = device.type != 'cpu'
    init_seeds(2 + rank)
    with open(opt.data) as f:
        data_dict = yaml.load(f, Loader=yaml.FullLoader)  # data dict
    with torch_distributed_zero_first(rank):
        check_dataset(data_dict)  # check
    train_path = data_dict['train']
    test_path = data_dict['val']
    nc = 1 if opt.single_cls else int(data_dict['nc'])  # number of classes
    names = ['item'] if opt.single_cls and len(data_dict['names']) != 1 else data_dict['names']  # class names
    assert len(names) == nc, '%g names found for nc=%g dataset in %s' % (len(names), nc, opt.data)  # check

    # Model
    check_suffix(weights, '.pt')  # check weights
    pretrained = weights.endswith('.pt')
    if pretrained:
        with torch_distributed_zero_first(rank):
            attempt_download(weights)  # download if not found locally
        ckpt = torch.load(weights, map_location=device)  # load checkpoint
        if hyp.get('anchors'):
            ckpt['model'].yaml['anchors'] = round(hyp['anchors'])  # force autoanchor
        model = Model(opt.cfg or ckpt['model'].yaml, ch=3, nc=nc).to(device)  # create
        exclude = ['anchor'] if opt.cfg or hyp.get('anchors') else []  # exclude keys
        state_dict = ckpt['model'].float().state_dict()  # to FP32
        state_dict = intersect_dicts(state_dict, model.state_dict(), exclude=exclude)  # intersect
        model.load_state_dict(state_dict, strict=False)  # load
        logger.info('Transferred %g/%g items from %s' % (len(state_dict), len(model.state_dict()), weights))  # report
    else:
        model = Model(opt.cfg, ch=3, nc=nc).to(device)  # create

    # Freeze
    freeze = []  # parameter names to freeze (full or partial)
    for k, v in model.named_parameters():
        v.requires_grad = True  # train all layers
        if any(x in k for x in freeze):
            print('freezing %s' % k)
            v.requires_grad = False

    # Optimizer
    nbs = 64  # nominal batch size
    accumulate = max(round(nbs / total_batch_size), 1)  # accumulate loss before optimizing
    hyp['weight_decay'] *= total_batch_size * accumulate / nbs  # scale weight_decay

    pg0, pg1, pg2 = [], [], []  # optimizer parameter groups
    for k, v in model.named_modules():
        if hasattr(v, 'bias') and isinstance(v.bias, nn.Parameter):
            pg2.append(v.bias)  # biases
        if isinstance(v, nn.BatchNorm2d):
            pg0.append(v.weight)  # no decay
        elif hasattr(v, 'weight') and isinstance(v.weight, nn.Parameter):
            pg1.append(v.weight)  # apply decay

    if opt.adam:
        optimizer = optim.Adam(pg0, lr=hyp['lr0'], betas=(hyp['momentum'], 0.999))  # adjust beta1 to momentum
    else:
        optimizer = optim.SGD(pg0, lr=hyp['lr0'], momentum=hyp['momentum'], nesterov=True)

    optimizer.add_param_group({'params': pg1, 'weight_decay': hyp['weight_decay']})  # add pg1 with weight_decay
    optimizer.add_param_group({'params': pg2})  # add pg2 (biases)
    logger.info('Optimizer groups: %g .bias, %g conv.weight, %g other' % (len(pg2), len(pg1), len(pg0)))
    del pg0, pg1, pg2

    # Scheduler https://arxiv.org/pdf/1812.01187.pdf
    # https://pytorch.org/docs/stable/_modules/torch/optim/lr_scheduler.html#OneCycleLR
    lf = lambda x: ((1 + math.cos(x * math.pi / epochs)) / 2) * (1 - hyp['lrf']) + hyp['lrf']  # cosine
    scheduler = lr_scheduler.LambdaLR(optimizer, lr_lambda=lf)
    # plot_lr_scheduler(optimizer, scheduler, epochs)

    # Logging
    if wandb and wandb.run is None:
        opt.hyp = hyp  # add hyperparameters
        wandb_run = wandb.init(config=opt, resume="allow",
                               project='YOLOv5' if opt.project == 'runs/train' else Path(opt.project).stem,
                               name=save_dir.stem,
                               id=ckpt.get('wandb_id') if 'ckpt' in locals() else None)
    loggers = {'wandb': wandb}  # loggers dict

    # Resume
    start_epoch, best_fitness = 0, 0.0
    if pretrained:
        # Optimizer
        if ckpt['optimizer'] is not None:
            optimizer.load_state_dict(ckpt['optimizer'])
            best_fitness = ckpt['best_fitness']

        # Results
        if ckpt.get('training_results') is not None:
            with open(results_file, 'w') as file:
                file.write(ckpt['training_results'])  # write results.txt

        # Epochs
        # start_epoch = ckpt['epoch'] + 1
        if opt.resume:
            assert start_epoch > 0, '%s training to %g epochs is finished, nothing to resume.' % (weights, epochs)
        if epochs < start_epoch:
            logger.info('%s has been trained for %g epochs. Fine-tuning for %g additional epochs.' %
                        (weights, ckpt['epoch'], epochs))
            epochs += ckpt['epoch']  # finetune additional epochs

        del ckpt, state_dict

    # Image sizes
    gs = int(max(model.stride))  # grid size (max stride)
    imgsz, imgsz_test = [check_img_size(x, gs) for x in opt.img_size]  # verify imgsz are gs-multiples

    # DP mode
    if cuda and rank == -1 and torch.cuda.device_count() > 1:
        model = torch.nn.DataParallel(model)

    # SyncBatchNorm
    if opt.sync_bn and cuda and rank != -1:
        model = torch.nn.SyncBatchNorm.convert_sync_batchnorm(model).to(device)
        logger.info('Using SyncBatchNorm()')

    # EMA
    ema = ModelEMA(model) if rank in [-1, 0] else None

    # DDP mode
    if cuda and rank != -1:
        model = DDP(model, device_ids=[opt.local_rank], output_device=opt.local_rank)

    # Trainloader
    dataloader, dataset = create_dataloader(train_path, imgsz, batch_size, gs, opt,
                                            hyp=hyp, augment=True, cache=opt.cache_images, rect=opt.rect, rank=rank,
                                            world_size=opt.world_size, workers=opt.workers,
                                            image_weights=opt.image_weights, prefix=colorstr('train: '))
    mlc = np.concatenate(dataset.labels, 0)[:, 0].max()  # max label class
    nb = len(dataloader)  # number of batches
    assert mlc < nc, 'Label class %g exceeds nc=%g in %s. Possible class labels are 0-%g' % (mlc, nc, opt.data, nc - 1)

    # Process 0
    if rank in [-1, 0]:
        ema.updates = start_epoch * nb // accumulate  # set EMA updates
        testloader = create_dataloader(test_path, imgsz_test, total_batch_size, gs, opt,  # testloader
                                       hyp=hyp, cache=opt.cache_images and not opt.notest, rect=True,
                                       rank=-1, world_size=opt.world_size, workers=opt.workers,
                                       pad=0.5, prefix=colorstr('val: '))[0]

        if not opt.resume:
            labels = np.concatenate(dataset.labels, 0)
            c = torch.tensor(labels[:, 0])  # classes
            # cf = torch.bincount(c.long(), minlength=nc) + 1.  # frequency
            # model._initialize_biases(cf.to(device))
            if plots:
                plot_labelsf(labels, save_dir, loggers)
                if tb_writer:
                    tb_writer.add_histogram('classes', c, 0)

            # Anchors
            if not opt.noautoanchor:
                check_anchors(dataset, model=model, thr=hyp['anchor_t'], imgsz=imgsz)

    # Model parameters
    hyp['cls'] *= nc / 80.  # scale coco-tuned hyp['cls'] to current dataset
    model.nc = nc  # attach number of classes to model
    model.hyp = hyp  # attach hyperparameters to model
    model.gr = 1.0  # iou loss ratio (obj_loss = 1.0 or iou)
    model.class_weights = labels_to_class_weights(dataset.labels, nc).to(device) * nc  # attach class weights
    model.names = names

    # Start training
    t0 = time.time()
    nw = max(round(hyp['warmup_epochs'] * nb), 1000)  # number of warmup iterations, max(3 epochs, 1k iterations)
    # nw = min(nw, (epochs - start_epoch) / 2 * nb)  # limit warmup to < 1/2 of training
    maps = np.zeros(nc)  # mAP per class
    results = (0, 0, 0, 0, 0, 0, 0)  # P, R, mAP@.5, mAP@.5-.95, val_loss(box, obj, cls)
    scheduler.last_epoch = start_epoch - 1  # do not move
    scaler = amp.GradScaler(enabled=cuda)
    logger.info('Image sizes %g train, %g test\n'
                'Using %g dataloader workers\nLogging results to %s\n'
                'Starting training for %g epochs...' % (imgsz, imgsz_test, dataloader.num_workers, save_dir, epochs))
    for epoch in range(start_epoch, epochs):  # epoch ------------------------------------------------------------------
        model.train()

        # Update image weights (optional)
        if opt.image_weights:
            # Generate indices
            if rank in [-1, 0]:
                cw = model.class_weights.cpu().numpy() * (1 - maps) ** 2 / nc  # class weights
                iw = labels_to_image_weights(dataset.labels, nc=nc, class_weights=cw)  # image weights
                dataset.indices = random.choices(range(dataset.n), weights=iw, k=dataset.n)  # rand weighted idx
            # Broadcast if DDP
            if rank != -1:
                indices = (torch.tensor(dataset.indices) if rank == 0 else torch.zeros(dataset.n)).int()
                dist.broadcast(indices, 0)
                if rank != 0:
                    dataset.indices = indices.cpu().numpy()

        # Update mosaic border
        # b = int(random.uniform(0.25 * imgsz, 0.75 * imgsz + gs) // gs * gs)
        # dataset.mosaic_border = [b - imgsz, -b]  # height, width borders

        mloss = torch.zeros(5, device=device)  # mean losses
        if rank != -1:
            dataloader.sampler.set_epoch(epoch)
        pbar = enumerate(dataloader)
        logger.info(
            ('\n' + '%10s' * 9) % ('Epoch', 'gpu_mem', 'box', 'obj', 'cls', 'landmark', 'total', 'targets', 'img_size'))
        if rank in [-1, 0]:
            pbar = tqdm(pbar, total=nb)  # progress bar
        optimizer.zero_grad()
        for i, (imgs, targets, paths, _) in pbar:  # batch -------------------------------------------------------------
            ni = i + nb * epoch  # number integrated batches (since train start)
            imgs = imgs.to(device, non_blocking=True).float() / 255.0  # uint8 to float32, 0-255 to 0.0-1.0

            # Warmup
            if ni <= nw:
                xi = [0, nw]  # x interp
                # model.gr = np.interp(ni, xi, [0.0, 1.0])  # iou loss ratio (obj_loss = 1.0 or iou)
                accumulate = max(1, np.interp(ni, xi, [1, nbs / total_batch_size]).round())
                for j, x in enumerate(optimizer.param_groups):
                    # bias lr falls from 0.1 to lr0, all other lrs rise from 0.0 to lr0
                    x['lr'] = np.interp(ni, xi, [hyp['warmup_bias_lr'] if j == 2 else 0.0, x['initial_lr'] * lf(epoch)])
                    if 'momentum' in x:
                        x['momentum'] = np.interp(ni, xi, [hyp['warmup_momentum'], hyp['momentum']])

            # Multi-scale
            if opt.multi_scale:
                sz = random.randrange(imgsz * 0.5, imgsz * 1.5 + gs) // gs * gs  # size
                sf = sz / max(imgs.shape[2:])  # scale factor
                if sf != 1:
                    ns = [math.ceil(x * sf / gs) * gs for x in imgs.shape[2:]]  # new shape (stretched to gs-multiple)
                    imgs = F.interpolate(imgs, size=ns, mode='bilinear', align_corners=False)

            # Forward
            with amp.autocast(enabled=cuda):
                pred = model(imgs)  # forward
                loss, loss_items = compute_loss(pred, targets.to(device), model)  # loss scaled by batch_size
                if rank != -1:
                    loss *= opt.world_size  # gradient averaged between devices in DDP mode

            # Backward
            scaler.scale(loss).backward()

            # Optimize
            if ni % accumulate == 0:
                scaler.step(optimizer)  # optimizer.step
                scaler.update()
                optimizer.zero_grad()
                if ema:
                    ema.update(model)

            # Print
            if rank in [-1, 0]:
                mloss = (mloss * i + loss_items) / (i + 1)  # update mean losses
                mem = '%.3gG' % (torch.cuda.memory_reserved() / 1E9 if torch.cuda.is_available() else 0)  # (GB)
                s = ('%10s' * 2 + '%10.4g' * 7) % (
                    '%g/%g' % (epoch, epochs - 1), mem, *mloss, targets.shape[0], imgs.shape[-1])
                pbar.set_description(s)

                # Plot
                if plots and ni < 3:
                    f = save_dir / f'train_batch{ni}.jpg'  # filename
                    Thread(target=plot_images, args=(imgs, targets, paths, f), daemon=True).start()
                    # if tb_writer:
                    #     tb_writer.add_image(f, result, dataformats='HWC', global_step=epoch)
                    #     tb_writer.add_graph(model, imgs)  # add model to tensorboard
                elif plots and ni == 3 and wandb:
                    wandb.log({"Mosaics": [wandb.Image(str(x), caption=x.name) for x in save_dir.glob('train*.jpg')]})

            # end batch ------------------------------------------------------------------------------------------------
        # end epoch ----------------------------------------------------------------------------------------------------

        # Scheduler
        lr = [x['lr'] for x in optimizer.param_groups]  # for tensorboard
        scheduler.step()

        # DDP process 0 or single-GPU
        if rank in [-1, 0] and epoch >= 0:
            # mAP
            if ema:
                ema.update_attr(model, include=['yaml', 'nc', 'hyp', 'gr', 'names', 'stride', 'class_weights'])
            final_epoch = epoch + 1 == epochs
            if not opt.notest or final_epoch:  # Calculate mAP
                results, maps, times = test.test(opt.data,
                                                 batch_size=total_batch_size,
                                                 imgsz=imgsz_test,
                                                 model=ema.ema,
                                                 single_cls=opt.single_cls,
                                                 dataloader=testloader,
                                                 save_dir=save_dir,
                                                 plots=False,
                                                 log_imgs=opt.log_imgs if wandb else 0)

            # Write
            with open(results_file, 'a') as f:
                f.write(s + '%10.4g' * 7 % results + '\n')  # P, R, mAP@.5, mAP@.5-.95, val_loss(box, obj, cls)
            if len(opt.name) and opt.bucket:
                os.system('gsutil cp %s gs://%s/results/results%s.txt' % (results_file, opt.bucket, opt.name))

            # Log
            tags = ['train/box_loss', 'train/obj_loss', 'train/cls_loss',  # train loss
                    'metrics/precision', 'metrics/recall', 'metrics/mAP_0.5', 'metrics/mAP_0.5:0.95',
                    'val/box_loss', 'val/obj_loss', 'val/cls_loss',  # val loss
                    'x/lr0', 'x/lr1', 'x/lr2']  # params
            for x, tag in zip(list(mloss[:-1]) + list(results) + lr, tags):
                if tb_writer:
                    tb_writer.add_scalar(tag, x, epoch)  # tensorboard
                if wandb:
                    wandb.log({tag: x})  # W&B

            # Update best mAP
            fi = fitness(np.array(results).reshape(1, -1))  # weighted combination of [P, R, mAP@.5, mAP@.5-.95]
            if fi > best_fitness:
                best_fitness = fi

            # Save model
            save = (not opt.nosave) or (final_epoch and not opt.evolve)
            if save:
                with open(results_file, 'r') as f:  # create checkpoint
                    ckpt = {'epoch': epoch,
                            'best_fitness': best_fitness,
                            'training_results': f.read(),
                            'model': ema.ema,
                            'optimizer': None if final_epoch else optimizer.state_dict(),
                            'wandb_id': wandb_run.id if wandb else None}

                # Save last, best and delete
                torch.save(ckpt, last)
                if best_fitness == fi:
                    torch.save(ckpt, best)
                del ckpt
        # end epoch ----------------------------------------------------------------------------------------------------
    # end training

    if rank in [-1, 0]:
        # Strip optimizers
        final = best if best.exists() else last  # final model
        for f in [last, best]:
            if f.exists():
                strip_optimizer(f)  # strip optimizers
        if opt.bucket:
            os.system(f'gsutil cp {final} gs://{opt.bucket}/weights')  # upload

        # Plots
        if plots:
            plot_resultsf(save_dir=save_dir)  # save as results.png
            if wandb:
                files = ['results.png', 'precision_recall_curve.png', 'confusion_matrix.png']
                wandb.log({"Results": [wandb.Image(str(save_dir / f), caption=f) for f in files
                                       if (save_dir / f).exists()]})
                if opt.log_artifacts:
                    wandb.log_artifact(artifact_or_path=str(final), type='model', name=save_dir.stem)

        # Test best.pt
        logger.info('%g epochs completed in %.3f hours.\n' % (epoch - start_epoch + 1, (time.time() - t0) / 3600))
        for f in last, best:
            if f.exists():
                strip_optimizer(f)  # strip optimizers
                if f is best:
                    LOGGER.info(f'\nValidating {f}...')
                    results, _, _ = test.test(
                        opt.data,
                        batch_size=total_batch_size,
                        imgsz=imgsz_test,
                        conf_thres=0.001,
                        iou_thres=0.65,
                        model=attempt_load(final, device),
                        single_cls=opt.single_cls,
                        dataloader=testloader,
                        save_dir=save_dir,
                        save_json=False,
                        plots=True)  # val best model with plots
    else:
        dist.destroy_process_group()

    wandb.run.finish() if wandb and wandb.run else None
    torch.cuda.empty_cache()
    return results


def parse_opt(known=False):
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', type=str, default=ROOT / 'yolov5n-lmk.pt', help='initial weights path')
    parser.add_argument('--cfg', type=str, default=ROOT / 'models/landmark/yolov5n-landmark.yaml',
                        help='model.yaml path')
    parser.add_argument('--data', type=str, default=ROOT / 'data/widerface.yaml', help='data.yaml path')
    parser.add_argument('--hyp', type=str, default=ROOT / 'data/hyps/hyp.scratch.yaml', help='hyperparameters path')
    parser.add_argument('--epochs', type=int, default=300)
    parser.add_argument('--batch-size', type=int, default=16, help='total batch size for all GPUs')
    parser.add_argument('--img-size', nargs='+', type=int, default=[800, 800], help='[train, test] image sizes')
    parser.add_argument('--rect', action='store_true', help='rectangular training')
    parser.add_argument('--resume', nargs='?', const=True, default=False, help='resume most recent training')
    parser.add_argument('--nosave', action='store_true', help='only save final checkpoint')
    parser.add_argument('--notest', action='store_true', help='only test final epoch')
    parser.add_argument('--noautoanchor', action='store_true', help='disable autoanchor check')
    parser.add_argument('--evolve', type=int, nargs='?', const=300, help='evolve hyperparameters for x generations')
    parser.add_argument('--bucket', type=str, default='', help='gsutil bucket')
    parser.add_argument('--cache-images', action='store_true', help='cache images for faster training')
    parser.add_argument('--image-weights', action='store_true', help='use weighted image selection for training')
    parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--multi-scale', action='store_true', default=False, help='vary img-size +/- 50%%')
    parser.add_argument('--single-cls', action='store_true', help='train multi-class data as single-class')
    parser.add_argument('--adam', action='store_true', help='use torch.optim.Adam() optimizer')
    parser.add_argument('--sync-bn', action='store_true', help='use SyncBatchNorm, only available in DDP mode')
    parser.add_argument('--local_rank', type=int, default=-1, help='DDP parameter, do not modify')
    parser.add_argument('--log-imgs', type=int, default=16, help='number of images for W&B logging, max 100')
    parser.add_argument('--log-artifacts', action='store_true', help='log artifacts, i.e. final trained model')
    parser.add_argument('--workers', type=int, default=4, help='maximum number of dataloader workers')
    parser.add_argument('--project', default=ROOT / 'runs/train-lmk', help='save to project/name')
    parser.add_argument('--name', default='exp', help='save to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    return parser.parse_known_args()[0] if known else parser.parse_args()


def main(opt, callbacks=Callbacks()):
    # Set DDP variables
    opt.total_batch_size = opt.batch_size
    opt.world_size = int(os.environ['WORLD_SIZE']) if 'WORLD_SIZE' in os.environ else 1
    opt.global_rank = int(os.environ['RANK']) if 'RANK' in os.environ else -1
    set_logging(str(opt.global_rank))
    if opt.global_rank in [-1, 0]:
        check_git_status()

    # Resume
    if opt.resume and not opt.evolve:  # resume from specified or most recent last.pt
        last = Path(check_file(opt.resume) if isinstance(opt.resume, str) else get_latest_run())
        opt_yaml = last.parent.parent / 'opt.yaml'  # train options yaml
        opt_data = opt.data  # original dataset
        if opt_yaml.is_file():
            with open(opt_yaml, errors='ignore') as f:
                d = yaml.safe_load(f)
        else:
            d = torch.load(last, map_location='cpu')['opt']
        opt = argparse.Namespace(**d)  # replace
        opt.cfg, opt.weights, opt.resume = '', str(last), True  # reinstate
        if is_url(opt_data):
            opt.data = check_file(opt_data)  # avoid HUB resume auth timeout
    else:
        opt.data, opt.cfg, opt.hyp, opt.weights, opt.project = \
            check_file(opt.data), check_yaml(opt.cfg), check_yaml(opt.hyp), str(opt.weights), str(opt.project)  # checks
        assert len(opt.cfg) or len(opt.weights), 'either --cfg or --weights must be specified'
        if opt.evolve:
            if opt.project == str(ROOT / 'runs/train'):  # if default project name, rename to runs/evolve
                opt.project = str(ROOT / 'runs/evolve')
            opt.exist_ok, opt.resume = opt.resume, False  # pass resume to exist_ok and disable resume
        if opt.name == 'cfg':
            opt.name = Path(opt.cfg).stem  # use model.yaml as name
        opt.save_dir = str(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok))

    # DDP mode
    device = select_device(opt.device, batch_size=opt.batch_size)
    if opt.local_rank != -1:
        assert torch.cuda.device_count() > opt.local_rank
        torch.cuda.set_device(opt.local_rank)
        device = torch.device('cuda', opt.local_rank)
        dist.init_process_group(backend='nccl', init_method='env://')  # distributed backend
        assert opt.batch_size % opt.world_size == 0, '--batch-size must be multiple of CUDA device count'
        opt.batch_size = opt.total_batch_size // opt.world_size

    # Hyperparameters
    with open(opt.hyp) as f:
        hyp = yaml.load(f, Loader=yaml.FullLoader)  # load hyps
        if 'box' not in hyp:
            warn('Compatibility: %s missing "box" which was renamed from "giou" in %s' %
                 (opt.hyp, 'https://github.com/ultralytics/yolov5/pull/1120'))
            hyp['box'] = hyp.pop('giou')

    # Train
    logger.info(opt)
    if not opt.evolve:
        tb_writer = None  # init loggers
        if opt.global_rank in [-1, 0]:
            logger.info(f'Start Tensorboard with "tensorboard --logdir {opt.project}", view at http://localhost:6006/')
            tb_writer = SummaryWriter(opt.save_dir)  # Tensorboard
        train(hyp, opt, device, tb_writer, wandb)

    # Evolve hyperparameters (optional)
    else:
        # Hyperparameter evolution metadata (mutation scale 0-1, lower_limit, upper_limit)
        meta = {'lr0': (1, 1e-5, 1e-1),  # initial learning rate (SGD=1E-2, Adam=1E-3)
                'lrf': (1, 0.01, 1.0),  # final OneCycleLR learning rate (lr0 * lrf)
                'momentum': (0.3, 0.6, 0.98),  # SGD momentum/Adam beta1
                'weight_decay': (1, 0.0, 0.001),  # optimizer weight decay
                'warmup_epochs': (1, 0.0, 5.0),  # warmup epochs (fractions ok)
                'warmup_momentum': (1, 0.0, 0.95),  # warmup initial momentum
                'warmup_bias_lr': (1, 0.0, 0.2),  # warmup initial bias lr
                'box': (1, 0.02, 0.2),  # box loss gain
                'cls': (1, 0.2, 4.0),  # cls loss gain
                'cls_pw': (1, 0.5, 2.0),  # cls BCELoss positive_weight
                'obj': (1, 0.2, 4.0),  # obj loss gain (scale with pixels)
                'obj_pw': (1, 0.5, 2.0),  # obj BCELoss positive_weight
                'iou_t': (0, 0.1, 0.7),  # IoU training threshold
                'anchor_t': (1, 2.0, 8.0),  # anchor-multiple threshold
                'anchors': (2, 2.0, 10.0),  # anchors per output grid (0 to ignore)
                'fl_gamma': (0, 0.0, 2.0),  # focal loss gamma (efficientDet default gamma=1.5)
                'hsv_h': (1, 0.0, 0.1),  # image HSV-Hue augmentation (fraction)
                'hsv_s': (1, 0.0, 0.9),  # image HSV-Saturation augmentation (fraction)
                'hsv_v': (1, 0.0, 0.9),  # image HSV-Value augmentation (fraction)
                'degrees': (1, 0.0, 45.0),  # image rotation (+/- deg)
                'translate': (1, 0.0, 0.9),  # image translation (+/- fraction)
                'scale': (1, 0.0, 0.9),  # image scale (+/- gain)
                'shear': (1, 0.0, 10.0),  # image shear (+/- deg)
                'perspective': (0, 0.0, 0.001),  # image perspective (+/- fraction), range 0-0.001
                'flipud': (1, 0.0, 1.0),  # image flip up-down (probability)
                'fliplr': (0, 0.0, 1.0),  # image flip left-right (probability)
                'mosaic': (1, 0.0, 1.0),  # image mixup (probability)
                'mixup': (1, 0.0, 1.0)}  # image mixup (probability)

        assert opt.local_rank == -1, 'DDP mode not implemented for --evolve'
        opt.notest, opt.nosave = True, True  # only test/save final epoch
        # ei = [isinstance(x, (int, float)) for x in hyp.values()]  # evolvable indices
        yaml_file = Path(opt.save_dir) / 'hyp_evolved.yaml'  # save best result here
        if opt.bucket:
            os.system('gsutil cp gs://%s/evolve.txt .' % opt.bucket)  # download evolve.txt if exists

        for _ in range(opt.evolve):  # generations to evolve
            if Path('evolve.txt').exists():  # if evolve.txt exists: select best hyps and mutate
                # Select parent(s)
                parent = 'single'  # parent selection method: 'single' or 'weighted'
                x = np.loadtxt('evolve.txt', ndmin=2)
                n = min(5, len(x))  # number of previous results to consider
                x = x[np.argsort(-fitness(x))][:n]  # top n mutations
                w = fitness(x) - fitness(x).min() + 1E-6  # weights
                if parent == 'single' or len(x) == 1:
                    # x = x[random.randint(0, n - 1)]  # random selection
                    x = x[random.choices(range(n), weights=w)[0]]  # weighted selection
                elif parent == 'weighted':
                    x = (x * w.reshape(n, 1)).sum(0) / w.sum()  # weighted combination

                # Mutate
                mp, s = 0.8, 0.2  # mutation probability, sigma
                npr = np.random
                npr.seed(int(time.time()))
                g = np.array([x[0] for x in meta.values()])  # gains 0-1
                ng = len(meta)
                v = np.ones(ng)
                while all(v == 1):  # mutate until a change occurs (prevent duplicates)
                    v = (g * (npr.random(ng) < mp) * npr.randn(ng) * npr.random() * s + 1).clip(0.3, 3.0)
                for i, k in enumerate(hyp.keys()):  # plt.hist(v.ravel(), 300)
                    hyp[k] = float(x[i + 7] * v[i])  # mutate

            # Constrain to limits
            for k, v in meta.items():
                hyp[k] = max(hyp[k], v[1])  # lower limit
                hyp[k] = min(hyp[k], v[2])  # upper limit
                hyp[k] = round(hyp[k], 5)  # significant digits

            # Train mutation
            results = train(hyp.copy(), opt, device, wandb=wandb)

            # Write mutation results
            # keys = ('metrics/precision', 'metrics/recall', 'metrics/mAP_0.5', 'metrics/mAP_0.5:0.95', 'val/box_loss',
            #         'val/obj_loss', 'val/cls_loss')
            print_mutation(hyp.copy(), results, yaml_file, opt.bucket)
            # print_mutation(keys, results, hyp.copy(), save_dir, opt.bucket)

        # Plot results
        plot_evolution(yaml_file)
        print(f'Hyperparameter evolution complete. Best results saved as: {yaml_file}\n'
              f'Command to train a new model with these hyperparameters: $ python train.py --hyp {yaml_file}')


def run(**kwargs):
    # Usage: import train; train.run(data='coco128.yaml', imgsz=320, weights='yolov5m.pt')
    opt = parse_opt(True)
    for k, v in kwargs.items():
        setattr(opt, k, v)
    main(opt)
    return opt


if __name__ == "__main__":
    opt = parse_opt()
    main(opt)
```

landmark/test.py

import argparseimport jsonimport osimport sysfrom pathlib import Pathfrom threading import Thread import numpy as npimport torchimport yamlfrom tqdm import tqdm FILE = Path(__file__).resolve()ROOT = FILE.parents[1] # YOLOv5 root directoryif str(ROOT) not in sys.path: sys.path.append(str(ROOT)) # add ROOT to PATHROOT = Path(os.path.relpath(ROOT, Path.cwd())) # relative from models.experimental import attempt_loadfrom utils.landmark.dataloaders import create_dataloaderfrom utils.general import coco80_to_coco91_class, check_dataset, check_file, check_img_size, box_iou, \ scale_boxes, xyxy2xywh, xywh2xyxy, set_logging, increment_pathfrom utils.landmark.general import non_max_suppression_facefrom utils.landmark.loss import compute_lossfrom utils.metrics import ConfusionMatrixfrom utils.landmark.metrics import ap_per_classffrom utils.landmark.plots import plot_imagesf, output_to_targetf, plot_study_txtfrom utils.torch_utils import select_device, time_sync def test(data, weights=None, batch_size=32, imgsz=640, conf_thres=0.001, iou_thres=0.6, # for NMS save_json=False, single_cls=False, augment=False, verbose=False, model=None, dataloader=None, save_dir=Path(''), # for saving images save_txt=False, # for auto-labelling save_hybrid=False, # for hybrid auto-labelling save_conf=False, # save auto-label confidences plots=True, log_imgs=0): # number of logged images # Initialize/load model and set device training = model is not None if training: # called by train.py device = next(model.parameters()).device # get model device else: # called directly set_logging() device = select_device(opt.device, batch_size=batch_size) # Directories save_dir = Path(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok)) # increment run (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True) # make dir # Load model model = attempt_load(weights) # load FP32 model imgsz = check_img_size(imgsz, s=model.stride.max()) # check img_size # Multi-GPU disabled, 
incompatible with .half() https://github.com/ultralytics/yolov5/issues/99 # if device.type != 'cpu' and torch.cuda.device_count() > 1: # model = nn.DataParallel(model) # Half half = device.type != 'cpu' # half precision only supported on CUDA if half: model.half() # Configure model.eval() is_coco = data.endswith('coco.yaml') # is COCO dataset with open(data) as f: data = yaml.load(f, Loader=yaml.FullLoader) # model dict check_dataset(data) # check nc = 1 if single_cls else int(data['nc']) # number of classes iouv = torch.linspace(0.5, 0.95, 10).to(device) # iou vector for mAP@0.5:0.95 niou = iouv.numel() # Logging log_imgs, wandb = min(log_imgs, 100), None # ceil try: import wandb # Weights & Biases except ImportError: log_imgs = 0 # Dataloader if not training: img = torch.zeros((1, 3, imgsz, imgsz), device=device) # init img _ = model(img.half() if half else img) if device.type != 'cpu' else None # run once path = data['test'] if opt.task == 'test' else data['val'] # path to val/test images dataloader = create_dataloader(path, imgsz, batch_size, model.stride.max(), opt, pad=0.5, rect=True)[0] seen = 0 confusion_matrix = ConfusionMatrix(nc=nc) names = {k: v for k, v in enumerate(model.names if hasattr(model, 'names') else model.module.names)} coco91class = coco80_to_coco91_class() s = ('%20s' + '%12s' * 6) % ('Class', 'Images', 'Targets', 'P', 'R', 'mAP@.5', 'mAP@.5:.95') p, r, f1, mp, mr, map50, map, t0, t1 = 0., 0., 0., 0., 0., 0., 0., 0., 0. 
loss = torch.zeros(3, device=device) jdict, stats, ap, ap_class, wandb_images = [], [], [], [], [] for batch_i, (img, targets, paths, shapes) in enumerate(tqdm(dataloader, desc=s)): img = img.to(device, non_blocking=True) img = img.half() if half else img.float() # uint8 to fp16/32 img /= 255.0 # 0 - 255 to 0.0 - 1.0 targets = targets.to(device) nb, _, height, width = img.shape # batch size, channels, height, width with torch.no_grad(): # Run model t = time_sync() inf_out, train_out = model(img, augment=augment) # inference and training outputs t0 += time_sync() - t # Compute loss if training: loss += compute_loss([x.float() for x in train_out], targets, model)[1][:3] # box, obj, cls # Run NMS targets[:, 2:6] *= torch.Tensor([width, height, width, height]).to(device) # to pixels lb = [targets[targets[:, 0] == i, 1:] for i in range(nb)] if save_hybrid else [] # for autolabelling t = time_sync() #output = non_max_suppression(inf_out, conf_thres=conf_thres, iou_thres=iou_thres, labels=lb) output = non_max_suppression_face(inf_out, conf_thres=conf_thres, iou_thres=iou_thres, labels=lb) t1 += time_sync() - t # Statistics per image for si, pred in enumerate(output): pred = torch.cat((pred[:, :5], pred[:, 15:]), 1) # throw landmark in thresh labels = targets[targets[:, 0] == si, 1:] nl = len(labels) tcls = labels[:, 0].tolist() if nl else [] # target class path = Path(paths[si]) seen += 1 if len(pred) == 0: if nl: stats.append((torch.zeros(0, niou, dtype=torch.bool), torch.Tensor(), torch.Tensor(), tcls)) if plots: confusion_matrix.process_batch(detections=None, labels=labels[:, 0]) continue # Predictions predn = pred.clone() scale_boxes(img[si].shape[1:], predn[:, :4], shapes[si][0], shapes[si][1]) # native-space pred # Append to text file if save_txt: gn = torch.tensor(shapes[si][0])[[1, 0, 1, 0]] # normalization gain whwh for *xyxy, conf, cls in predn.tolist(): xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist() # normalized xywh line = (cls, 
                            *xywh, conf) if save_conf else (cls, *xywh)  # label format
                    with open(save_dir / 'labels' / (path.stem + '.txt'), 'a') as f:
                        f.write(('%g ' * len(line)).rstrip() % line + '\n')

            # W&B logging
            if plots and len(wandb_images) < log_imgs:
                box_data = [{"position": {"minX": xyxy[0], "minY": xyxy[1], "maxX": xyxy[2], "maxY": xyxy[3]},
                             "class_id": int(cls),
                             "box_caption": "%s %.3f" % (names[cls], conf),
                             "scores": {"class_score": conf},
                             "domain": "pixel"} for *xyxy, conf, cls in pred.tolist()]
                boxes = {"predictions": {"box_data": box_data, "class_labels": names}}  # inference-space
                wandb_images.append(wandb.Image(img[si], boxes=boxes, caption=path.name))

            # Append to pycocotools JSON dictionary
            if save_json:
                # [{"image_id": 42, "category_id": 18, "bbox": [258.15, 41.29, 348.26, 243.78], "score": 0.236}, ...
                image_id = int(path.stem) if path.stem.isnumeric() else path.stem
                box = xyxy2xywh(predn[:, :4])  # xywh
                box[:, :2] -= box[:, 2:] / 2  # xy center to top-left corner
                for p, b in zip(pred.tolist(), box.tolist()):
                    # pred was reduced to 6 columns (box, conf, cls) above, so the class is p[5], not p[15]
                    jdict.append({'image_id': image_id,
                                  'category_id': coco91class[int(p[5])] if is_coco else int(p[5]),
                                  'bbox': [round(x, 3) for x in b],
                                  'score': round(p[4], 5)})

            # Assign all predictions as incorrect
            correct = torch.zeros(pred.shape[0], niou, dtype=torch.bool, device=device)
            if nl:
                detected = []  # target indices
                tcls_tensor = labels[:, 0]

                # target boxes
                tbox = xywh2xyxy(labels[:, 1:5])
                scale_boxes(img[si].shape[1:], tbox, shapes[si][0], shapes[si][1])  # native-space labels
                if plots:
                    confusion_matrix.process_batch(pred, torch.cat((labels[:, 0:1], tbox), 1))

                # Per target class
                for cls in torch.unique(tcls_tensor):
                    ti = (cls == tcls_tensor).nonzero(as_tuple=False).view(-1)  # target indices
                    pi = (cls == pred[:, 5]).nonzero(as_tuple=False).view(-1)  # prediction indices

                    # Search for detections
                    if pi.shape[0]:
                        # Prediction to target ious
                        ious, i = box_iou(predn[pi, :4], tbox[ti]).max(1)  # best ious, indices

                        # Append detections
                        detected_set = set()
                        for j in (ious >
iouv[0]).nonzero(as_tuple=False): d = ti[i[j]] # detected target if d.item() not in detected_set: detected_set.add(d.item()) detected.append(d) correct[pi[j]] = ious[j] > iouv # iou_thres is 1xn if len(detected) == nl: # all targets already located in image break # Append statistics (correct, conf, pcls, tcls) stats.append((correct.cpu(), pred[:, 4].cpu(), pred[:, 5].cpu(), tcls)) # Plot images if plots and batch_i < 3: plot_imagesf(img, targets, paths, save_dir / f'val_batch{batch_i}_labels.jpg', names) # labels plot_imagesf(img, output_to_targetf(output), paths, save_dir / f'val_batch{batch_i}_pred.jpg', names) # pred # Compute statistics stats = [np.concatenate(x, 0) for x in zip(*stats)] # to numpy if len(stats) and stats[0].any(): p, r, ap, f1, ap_class = ap_per_classf(*stats, plot=plots, save_dir=save_dir, names=names) p, r, ap50, ap = p[:, 0], r[:, 0], ap[:, 0], ap.mean(1) # [P, R, AP@0.5, AP@0.5:0.95] mp, mr, map50, map = p.mean(), r.mean(), ap50.mean(), ap.mean() nt = np.bincount(stats[3].astype(np.int64), minlength=nc) # number of targets per class else: nt = torch.zeros(1) # Print results pf = '%20s' + '%12.3g' * 6 # print format print(pf % ('all', seen, nt.sum(), mp, mr, map50, map)) # Print results per class if verbose and nc > 1 and len(stats): for i, c in enumerate(ap_class): print(pf % (names[c], seen, nt[c], p[i], r[i], ap50[i], ap[i])) # Print speeds t = tuple(x / seen * 1E3 for x in (t0, t1, t0 + t1)) + (imgsz, imgsz, batch_size) # tuple if not training: print('Speed: %.1f/%.1f/%.1f ms inference/NMS/total per %gx%g image at batch-size %g' % t) # Plots if plots: confusion_matrix.plot(save_dir=save_dir, names=list(names.values())) if wandb and wandb.run: wandb.log({"Images": wandb_images}) wandb.log({"Validation": [wandb.Image(str(f), caption=f.name) for f in sorted(save_dir.glob('test*.jpg'))]}) # Save JSON if save_json and len(jdict): w = Path(weights[0] if isinstance(weights, list) else weights).stem if weights is not None else '' # weights 
anno_json = '../coco/annotations/instances_val2017.json' # annotations json pred_json = str(save_dir / f"{w}_predictions.json") # predictions json print('\nEvaluating pycocotools mAP... saving %s...' % pred_json) with open(pred_json, 'w') as f: json.dump(jdict, f) try: # https://github.com/cocodataset/cocoapi/blob/master/PythonAPI/pycocoEvalDemo.ipynb from pycocotools.coco import COCO from pycocotools.cocoeval import COCOeval anno = COCO(anno_json) # init annotations api pred = anno.loadRes(pred_json) # init predictions api eval = COCOeval(anno, pred, 'bbox') if is_coco: eval.params.imgIds = [int(Path(x).stem) for x in dataloader.dataset.img_files] # image IDs to evaluate eval.evaluate() eval.accumulate() eval.summarize() map, map50 = eval.stats[:2] # update results (mAP@0.5:0.95, mAP@0.5) except Exception as e: print(f'pycocotools unable to run: {e}') # Return results if not training: s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else '' print(f"Results saved to {save_dir}{s}") model.float() # for training maps = np.zeros(nc) + map for i, c in enumerate(ap_class): maps[c] = ap[i] return (mp, mr, map50, map, *(loss.cpu() / len(dataloader)).tolist()), maps, t if __name__ == '__main__': parser = argparse.ArgumentParser(prog='test.py') parser.add_argument('--weights', nargs='+', type=str, default=ROOT / 'yolov5n-lmk.pt', help='model.pt path(s)') parser.add_argument('--data', type=str, default=ROOT / 'data/widerface.yaml', help='*.data path') parser.add_argument('--batch-size', type=int, default=32, help='size of each image batch') parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)') parser.add_argument('--conf-thres', type=float, default=0.001, help='object confidence threshold') parser.add_argument('--iou-thres', type=float, default=0.6, help='IOU threshold for NMS') parser.add_argument('--task', default='val', help="'val', 'test', 'study'") parser.add_argument('--device', 
                        default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--single-cls', action='store_true', help='treat as single-class dataset')
    parser.add_argument('--augment', action='store_true', help='augmented inference')
    parser.add_argument('--verbose', action='store_true', help='report mAP by class')
    parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')
    parser.add_argument('--save-hybrid', action='store_true', help='save label+prediction hybrid results to *.txt')
    parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')
    parser.add_argument('--save-json', action='store_true', help='save a cocoapi-compatible JSON results file')
    parser.add_argument('--project', default='runs/test', help='save to project/name')
    parser.add_argument('--name', default='exp', help='save to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    opt = parser.parse_args()
    # The default --data is a Path (argparse applies type=str only to string defaults), so convert before endswith
    opt.save_json |= str(opt.data).endswith('coco.yaml')
    opt.data = check_file(opt.data)  # check file
    print(opt)

    if opt.task in ['val', 'test']:  # run normally
        test(opt.data,
             opt.weights,
             opt.batch_size,
             opt.img_size,
             opt.conf_thres,
             opt.iou_thres,
             opt.save_json,
             opt.single_cls,
             opt.augment,
             opt.verbose,
             save_txt=opt.save_txt | opt.save_hybrid,
             save_hybrid=opt.save_hybrid,
             save_conf=opt.save_conf,
             )

    elif opt.task == 'study':  # run over a range of settings and save/plot
        for weights in ['yolov5s.pt', 'yolov5m.pt', 'yolov5l.pt', 'yolov5x.pt']:
            f = 'study_%s_%s.txt' % (Path(opt.data).stem, Path(weights).stem)  # filename to save to
            x = list(range(320, 800, 64))  # x axis
            y = []  # y axis
            for i in x:  # img-size
                print('\nRunning %s point %s...' % (f, i))
                r, _, t = test(opt.data, weights, opt.batch_size, i, opt.conf_thres, opt.iou_thres, opt.save_json,
                               plots=False)
                y.append(r + t)  # results and times
            np.savetxt(f, y, fmt='%10.4g')  # save
        os.system('zip -r study.zip study_*.txt')
        plot_study_txt(f, x)  # plot

landmark/predict.py

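Before the full predict.py listing, it may help to see the row layout it relies on. Judging from the slices used in the listing (`det[:, :4]`, `det[j, 4]`, `det[j, 5:15]`, `det[j, 15]`), each detection returned by `non_max_suppression_face` appears to hold 16 values: box, confidence, five landmark points and the class. The sketch below is plain Python and only illustrative: `split_detection` and `rescale_landmarks` are hypothetical helper names, and the rescaling assumes the usual YOLOv5 letterbox convention (uniform gain plus centred padding). The source of `scale_coords_landmarks` is not shown here, so treat this as an approximation of what it does, not a copy of it.

```python
def split_detection(row):
    """Split one 16-value detection row into box, confidence, landmarks, class."""
    if len(row) != 16:
        raise ValueError(f"expected 16 values, got {len(row)}")
    box = row[0:4]                      # x1, y1, x2, y2
    conf = row[4]                       # confidence score
    pts = row[5:15]                     # x1, y1, ..., x5, y5
    landmarks = [(pts[i], pts[i + 1]) for i in range(0, 10, 2)]
    cls = int(row[15])                  # class index
    return box, conf, landmarks, cls


def rescale_landmarks(landmarks, model_hw, orig_hw):
    """Map (x, y) points from the letterboxed model input back to the original image.

    model_hw and orig_hw are (height, width) tuples. Assumes centred letterbox padding.
    """
    gain = min(model_hw[0] / orig_hw[0], model_hw[1] / orig_hw[1])
    pad_x = (model_hw[1] - orig_hw[1] * gain) / 2
    pad_y = (model_hw[0] - orig_hw[0] * gain) / 2
    out = []
    for x, y in landmarks:
        x = (x - pad_x) / gain          # undo padding, then undo scaling
        y = (y - pad_y) / gain
        x = min(max(x, 0.0), orig_hw[1])  # clip to the original image bounds
        y = min(max(y, 0.0), orig_hw[0])
        out.append((x, y))
    return out


if __name__ == "__main__":
    # One made-up detection on a 640x640 model input for a 480x640 (h, w) source image
    row = [10.0, 20.0, 110.0, 220.0, 0.9] + [320.0, 320.0] * 5 + [0.0]
    box, conf, landmarks, cls = split_detection(row)
    print(box, conf, cls)
    print(rescale_landmarks(landmarks, (640, 640), (480, 640)))
```

Keeping the class in the last column is why the listing reads `det[j, 15]` for the class, while columns 5 to 14 are passed to the landmark rescaling step.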
import argparseimport osimport platformimport sysfrom pathlib import Path import torch FILE = Path(__file__).resolve()ROOT = FILE.parents[1] # YOLOv5 root directoryif str(ROOT) not in sys.path: sys.path.append(str(ROOT)) # add ROOT to PATHROOT = Path(os.path.relpath(ROOT, Path.cwd())) # relative from models.common import DetectMultiBackendfrom utils.dataloaders import IMG_FORMATS, VID_FORMATS, LoadImages, LoadScreenshots, LoadStreamsfrom utils.general import (LOGGER, Profile, check_file, check_img_size, check_imshow, check_requirements, colorstr, cv2, increment_path, print_args, scale_boxes,strip_optimizer)from utils.landmark.general import non_max_suppression_face,scale_coords_landmarksfrom utils.plots import Annotator, colors, save_one_boxfrom utils.torch_utils import select_device, smart_inference_mode @smart_inference_mode()def run( weights=ROOT / 'yolov5n-lmk.pt', # model.pt path(s) source=ROOT / 'data/images', # file/dir/URL/glob/screen/0(webcam) data=ROOT / 'data/coco128.yaml', # dataset.yaml path imgsz=(640, 640), # inference size (height, width) conf_thres=0.25, # confidence threshold iou_thres=0.45, # NMS IOU threshold max_det=1000, # maximum detections per image device='', # cuda device, i.e. 
0 or 0,1,2,3 or cpu view_img=False, # show results save_txt=False, # save results to *.txt save_conf=False, # save confidences in --save-txt labels save_crop=False, # save cropped prediction boxes nosave=False, # do not save images/videos classes=None, # filter by class: --class 0, or --class 0 2 3 agnostic_nms=False, # class-agnostic NMS augment=False, # augmented inference visualize=False, # visualize features update=False, # update all models project=ROOT / 'runs/predict-lmk', # save results to project/name name='exp', # save results to project/name exist_ok=False, # existing project/name ok, do not increment line_thickness=3, # bounding box thickness (pixels) hide_labels=False, # hide labels hide_conf=False, # hide confidences half=False, # use FP16 half-precision inference dnn=False, # use OpenCV DNN for ONNX inference vid_stride=1, # video frame-rate stride): source = str(source) save_img = not nosave and not source.endswith('.txt') # save inference images is_file = Path(source).suffix[1:] in (IMG_FORMATS + VID_FORMATS) is_url = source.lower().startswith(('rtsp://', 'rtmp://', 'http://', 'https://')) webcam = source.isnumeric() or source.endswith('.txt') or (is_url and not is_file) screenshot = source.lower().startswith('screen') if is_url and is_file: source = check_file(source) # download # Directories save_dir = increment_path(Path(project) / name, exist_ok=exist_ok) # increment run (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True) # make dir # Load model device = select_device(device) model = DetectMultiBackend(weights, device=device, dnn=dnn, data=data, fp16=half) stride, names, pt = model.stride, model.names, model.pt imgsz = check_img_size(imgsz, s=stride) # check image size # Dataloader bs = 1 # batch_size if webcam: view_img = check_imshow() dataset = LoadStreams(source, img_size=imgsz, stride=stride, auto=pt, vid_stride=vid_stride) bs = len(dataset) elif screenshot: dataset = LoadScreenshots(source, img_size=imgsz, 
stride=stride, auto=pt) else: dataset = LoadImages(source, img_size=imgsz, stride=stride, auto=pt, vid_stride=vid_stride) vid_path, vid_writer = [None] * bs, [None] * bs # Run inference model.warmup(imgsz=(1 if pt else bs, 3, *imgsz)) # warmup seen, windows, dt = 0, [], (Profile(), Profile(), Profile()) for path, im, im0s, vid_cap, s in dataset: with dt[0]: im = torch.from_numpy(im).to(model.device) im = im.half() if model.fp16 else im.float() # uint8 to fp16/32 im /= 255 # 0 - 255 to 0.0 - 1.0 if len(im.shape) == 3: im = im[None] # expand for batch dim # Inference with dt[1]: visualize = increment_path(save_dir / Path(path).stem, mkdir=True) if visualize else False pred, proto = model(im, augment=augment, visualize=visualize)[:2] # NMS with dt[2]: pred = non_max_suppression_face(pred, conf_thres, iou_thres) # Second-stage classifier (optional) # pred = utils.general.apply_classifier(pred, classifier_model, im, im0s) # Process predictions for i, det in enumerate(pred): # per image seen += 1 if webcam: # batch_size >= 1 p, im0, frame = path[i], im0s[i].copy(), dataset.count s += f'{i}: ' else: p, im0, frame = path, im0s.copy(), getattr(dataset, 'frame', 0) p = Path(p) # to Path save_path = str(save_dir / p.name) # im.jpg txt_path = str(save_dir / 'labels' / p.stem) + ('' if dataset.mode == 'image' else f'_{frame}') # im.txt s += '%gx%g ' % im.shape[2:] # print string imc = im0.copy() if save_crop else im0 # for save_crop annotator = Annotator(im0, line_width=line_thickness, example=str(names)) if len(det): # Rescale boxes from img_size to im0 size det[:, :4] = scale_boxes(im.shape[2:], det[:, :4], im0.shape).round() # Print results for c in det[:, 5].unique(): n = (det[:, 5] == c).sum() # detections per class # s += f"{n} {names[int(c)]}{'s' * (n > 1)}, " # add to string det[:, 5:15] = scale_coords_landmarks(im.shape[2:], det[:, 5:15], im0.shape).round() # Write results for j in range(det.size()[0]): xyxy = det[j, :4].view(-1).tolist() conf = det[j, 
4].cpu().detach().numpy() landmarks = det[j, 5:15].view(-1).tolist() cls = det[j, 15].cpu().detach().numpy() if save_txt: line = (cls, *xyxy, conf) if save_conf else (cls, *xyxy) with open(f'{txt_path}.txt', 'a') as f: f.write(('%g ' * len(line)).rstrip() % line + '\n') if save_img or save_crop or view_img: # Add bbox to image c = int(cls) # integer class label = None if hide_labels else (names[c] if hide_conf else f'{names[c]} {conf:.2f}') annotator.box_label(xyxy, label, color=colors(c, True)) annotator.landmark(landmarks) if save_crop: save_one_box(xyxy, imc, file=save_dir / 'crops' / names[c] / f'{p.stem}.jpg', BGR=True) # Stream results im0 = annotator.result() if view_img: if platform.system() == 'Linux' and p not in windows: windows.append(p) cv2.namedWindow(str(p), cv2.WINDOW_NORMAL | cv2.WINDOW_KEEPRATIO) # allow window resize (Linux) cv2.resizeWindow(str(p), im0.shape[1], im0.shape[0]) cv2.imshow(str(p), im0) cv2.waitKey(1) # 1 millisecond # Save results (image with detections) if save_img: if dataset.mode == 'image': cv2.imwrite(save_path, im0) else: # 'video' or 'stream' if vid_path[i] != save_path: # new video vid_path[i] = save_path if isinstance(vid_writer[i], cv2.VideoWriter): vid_writer[i].release() # release previous video writer if vid_cap: # video fps = vid_cap.get(cv2.CAP_PROP_FPS) w = int(vid_cap.get(cv2.CAP_PROP_FRAME_WIDTH)) h = int(vid_cap.get(cv2.CAP_PROP_FRAME_HEIGHT)) else: # stream fps, w, h = 30, im0.shape[1], im0.shape[0] save_path = str(Path(save_path).with_suffix('.mp4')) # force *.mp4 suffix on results videos vid_writer[i] = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h)) vid_writer[i].write(im0) # Print time (inference-only) LOGGER.info(f"{s}{'' if len(det) else '(no detections), '}{dt[1].dt * 1E3:.1f}ms") # Print results t = tuple(x.t / seen * 1E3 for x in dt) # speeds per image LOGGER.info(f'Speed: %.1fms pre-process, %.1fms inference, %.1fms NMS per image at shape {(1, 3, *imgsz)}' % t) if save_txt or 
save_img: s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else '' LOGGER.info(f"Results saved to {colorstr('bold', save_dir)}{s}") if update: strip_optimizer(weights[0]) # update model (to fix SourceChangeWarning) def parse_opt(): parser = argparse.ArgumentParser() parser.add_argument('--weights', nargs='+', type=str, default=ROOT / 'yolov5n-lmk.pt', help='model path(s)') parser.add_argument('--source', type=str, default=ROOT / 'data/images', help='file/dir/URL/glob/screen/0(webcam)') parser.add_argument('--data', type=str, default=ROOT / 'data/widerface.yaml', help='(optional) dataset.yaml path') parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640], help='inference size h,w') parser.add_argument('--conf-thres', type=float, default=0.25, help='confidence threshold') parser.add_argument('--iou-thres', type=float, default=0.45, help='NMS IoU threshold') parser.add_argument('--max-det', type=int, default=1000, help='maximum detections per image') parser.add_argument('--device', default='', help='cuda device, i.e. 
0 or 0,1,2,3 or cpu') parser.add_argument('--view-img', action='store_true', help='show results') parser.add_argument('--save-txt', action='store_true', help='save results to *.txt') parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels') parser.add_argument('--save-crop', action='store_true', help='save cropped prediction boxes') parser.add_argument('--nosave', action='store_true', help='do not save images/videos') parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --classes 0, or --classes 0 2 3') parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS') parser.add_argument('--augment', action='store_true', help='augmented inference') parser.add_argument('--visualize', action='store_true', help='visualize features') parser.add_argument('--update', action='store_true', help='update all models') parser.add_argument('--project', default=ROOT / 'runs/predict-lmk', help='save results to project/name') parser.add_argument('--name', default='exp', help='save results to project/name') parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment') parser.add_argument('--line-thickness', default=3, type=int, help='bounding box thickness (pixels)') parser.add_argument('--hide-labels', default=False, action='store_true', help='hide labels') parser.add_argument('--hide-conf', default=False, action='store_true', help='hide confidences') parser.add_argument('--half', action='store_true', help='use FP16 half-precision inference') parser.add_argument('--dnn', action='store_true', help='use OpenCV DNN for ONNX inference') parser.add_argument('--vid-stride', type=int, default=1, help='video frame-rate stride') opt = parser.parse_args() opt.imgsz *= 2 if len(opt.imgsz) == 1 else 1 # expand print_args(vars(opt)) return opt def main(opt): check_requirements(exclude=('tensorboard', 'thop')) run(**vars(opt)) if __name__ == "__main__": opt = 
parse_opt()
    main(opt)

landmark/export.py

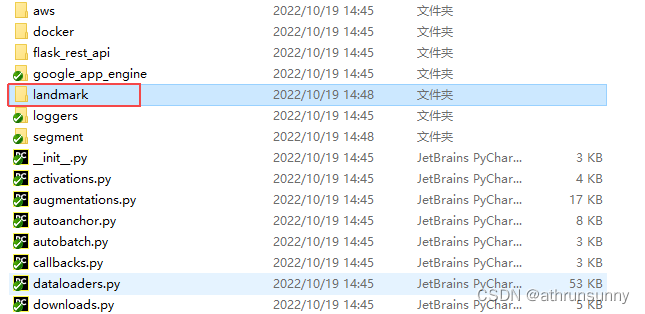

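Every exporter in the listing below is wrapped in a `try_export` decorator, which looks up the wrapped function's default `prefix` argument, times the call, and returns `(None, None)` instead of raising when an export fails. As a rough, dependency-free sketch of that pattern (not the YOLOv5 implementation itself), the names `default_args`, `safe_export` and `export_dummy` below are hypothetical, and `print` stands in for `LOGGER.info`:

```python
import inspect
import time


def default_args(func):
    """Return {name: default} for every parameter of func that has a default value."""
    sig = inspect.signature(func)
    return {k: v.default for k, v in sig.parameters.items() if v.default is not inspect.Parameter.empty}


def safe_export(inner_func):
    """Hypothetical stand-in for try_export: report the outcome and never raise."""
    prefix = default_args(inner_func).get("prefix", inner_func.__name__)

    def outer_func(*args, **kwargs):
        start = time.perf_counter()
        try:
            f, model = inner_func(*args, **kwargs)
            print(f"{prefix} export success {time.perf_counter() - start:.1f}s, saved as {f}")
            return f, model
        except Exception as e:  # one failed format should not abort the remaining exports
            print(f"{prefix} export failure {time.perf_counter() - start:.1f}s: {e}")
            return None, None

    return outer_func


@safe_export
def export_dummy(path, fail=False, prefix="Dummy:"):
    """Toy exporter: returns (path, None) or raises to simulate a failed export."""
    if fail:
        raise RuntimeError("simulated failure")
    return path, None


if __name__ == "__main__":
    export_dummy("model.onnx")
    export_dummy("model.onnx", fail=True)
```

The point of the design is that a caller can request several formats in one run and still get the ones that succeed, checking for `None` on the ones that did not.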
# YOLOv5 🚀 by Ultralytics, GPL-3.0 license"""Export a YOLOv5 PyTorch model to other formats. TensorFlow exports authored by https://github.com/zldrobitFormat | `export.py --include` | Model--- | --- | ---PyTorch | - | yolov5s.ptTorchScript | `torchscript` | yolov5s.torchscriptONNX | `onnx` | yolov5s.onnxOpenVINO | `openvino` | yolov5s_openvino_model/TensorRT | `engine` | yolov5s.engineCoreML | `coreml` | yolov5s.mlmodelTensorFlow SavedModel | `saved_model` | yolov5s_saved_model/TensorFlow GraphDef | `pb` | yolov5s.pbTensorFlow Lite | `tflite` | yolov5s.tfliteTensorFlow Edge TPU | `edgetpu` | yolov5s_edgetpu.tfliteTensorFlow.js | `tfjs` | yolov5s_web_model/PaddlePaddle | `paddle` | yolov5s_paddle_model/Requirements: $ pip install -r requirements.txt coremltools onnx onnx-simplifier onnxruntime openvino-dev tensorflow-cpu # CPU $ pip install -r requirements.txt coremltools onnx onnx-simplifier onnxruntime-gpu openvino-dev tensorflow # GPUUsage: $ python export.py --weights yolov5s.pt --include torchscript onnx openvino engine coreml tflite ...Inference: $ python detect.py --weights yolov5s.pt # PyTorch yolov5s.torchscript # TorchScript yolov5s.onnx # ONNX Runtime or OpenCV DNN with --dnn yolov5s.xml # OpenVINO yolov5s.engine # TensorRT yolov5s.mlmodel # CoreML (macOS-only) yolov5s_saved_model # TensorFlow SavedModel yolov5s.pb # TensorFlow GraphDef yolov5s.tflite # TensorFlow Lite yolov5s_edgetpu.tflite # TensorFlow Edge TPU yolov5s_paddle_model # PaddlePaddleTensorFlow.js: $ cd .. 
&& git clone https://github.com/zldrobit/tfjs-yolov5-example.git && cd tfjs-yolov5-example $ npm install $ ln -s ../../yolov5/yolov5s_web_model public/yolov5s_web_model $ npm start""" import argparseimport jsonimport osimport platformimport reimport subprocessimport sysimport timeimport warningsfrom pathlib import Path import pandas as pdimport torchimport torch.nn as nnfrom torch.utils.mobile_optimizer import optimize_for_mobile FILE = Path(__file__).resolve()ROOT = FILE.parents[1] # YOLOv5 root directoryif str(ROOT) not in sys.path: sys.path.append(str(ROOT)) # add ROOT to PATHif platform.system() != 'Windows': ROOT = Path(os.path.relpath(ROOT, Path.cwd())) # relative from models.experimental import attempt_loadffrom models.yolo import ClassificationModel, Detect, DetectionModel, SegmentationModel, LmkDetectfrom utils.dataloaders import LoadImagesfrom utils.general import (LOGGER, Profile, check_dataset, check_img_size, check_requirements, check_version, check_yaml, colorstr, file_size, get_default_args, print_args, url2file, yaml_save)from utils.torch_utils import select_device, smart_inference_mode MACOS = platform.system() == 'Darwin' # macOS environment def export_formats(): # YOLOv5 export formats x = [ ['PyTorch', '-', '.pt', True, True], ['TorchScript', 'torchscript', '.torchscript', True, True], ['ONNX', 'onnx', '.onnx', True, True], ['OpenVINO', 'openvino', '_openvino_model', True, False], ['TensorRT', 'engine', '.engine', False, True], ['CoreML', 'coreml', '.mlmodel', True, False], ['TensorFlow SavedModel', 'saved_model', '_saved_model', True, True], ['TensorFlow GraphDef', 'pb', '.pb', True, True], ['TensorFlow Lite', 'tflite', '.tflite', True, False], ['TensorFlow Edge TPU', 'edgetpu', '_edgetpu.tflite', False, False], ['TensorFlow.js', 'tfjs', '_web_model', False, False], ['PaddlePaddle', 'paddle', '_paddle_model', True, True], ] return pd.DataFrame(x, columns=['Format', 'Argument', 'Suffix', 'CPU', 'GPU']) def try_export(inner_func): # YOLOv5 export 
    # decorator, i.e. @try_export
    inner_args = get_default_args(inner_func)

    def outer_func(*args, **kwargs):
        prefix = inner_args['prefix']
        try:
            with Profile() as dt:
                f, model = inner_func(*args, **kwargs)
            LOGGER.info(f'{prefix} export success ✅ {dt.t:.1f}s, saved as {f} ({file_size(f):.1f} MB)')
            return f, model
        except Exception as e:
            LOGGER.info(f'{prefix} export failure ❌ {dt.t:.1f}s: {e}')
            return None, None

    return outer_func


@try_export
def export_torchscript(model, im, file, optimize, prefix=colorstr('TorchScript:')):
    # YOLOv5 TorchScript model export
    LOGGER.info(f'\n{prefix} starting export with torch {torch.__version__}...')
    f = file.with_suffix('.torchscript')

    ts = torch.jit.trace(model, im, strict=False)
    d = {"shape": im.shape, "stride": int(max(model.stride)), "names": model.names}
    extra_files = {'config.txt': json.dumps(d)}  # torch._C.ExtraFilesMap()
    if optimize:  # https://pytorch.org/tutorials/recipes/mobile_interpreter.html
        optimize_for_mobile(ts)._save_for_lite_interpreter(str(f), _extra_files=extra_files)
    else:
        ts.save(str(f), _extra_files=extra_files)
    return f, None


@try_export
def export_onnx(model, im, file, opset, dynamic, simplify, prefix=colorstr('ONNX:')):
    # YOLOv5 ONNX export
    check_requirements('onnx')
    import onnx

    LOGGER.info(f'\n{prefix} starting export with onnx {onnx.__version__}...')
    f = file.with_suffix('.onnx')

    output_names = ['output0', 'output1'] if isinstance(model, SegmentationModel) else ['output0']
    if dynamic:
        dynamic = {'images': {0: 'batch', 2: 'height', 3: 'width'}}  # shape(1,3,640,640)
        if isinstance(model, SegmentationModel):
            dynamic['output0'] = {0: 'batch', 1: 'anchors'}  # shape(1,25200,85)
            dynamic['output1'] = {0: 'batch', 2: 'mask_height', 3: 'mask_width'}  # shape(1,32,160,160)
        elif isinstance(model, DetectionModel):
            dynamic['output0'] = {0: 'batch', 1: 'anchors'}  # shape(1,25200,85)

    torch.onnx.export(
        model.cpu() if dynamic else model,  # --dynamic only compatible with cpu
        im.cpu() if dynamic else im,
        f,
        verbose=False,
opset_version=opset, do_constant_folding=True, input_names=['images'], output_names=output_names, dynamic_axes=dynamic or None) # Checks model_onnx = onnx.load(f) # load onnx model onnx.checker.check_model(model_onnx) # check onnx model # Metadata d = {'stride': int(max(model.stride)), 'names': model.names} for k, v in d.items(): meta = model_onnx.metadata_props.add() meta.key, meta.value = k, str(v) onnx.save(model_onnx, f) # Simplify if simplify: try: cuda = torch.cuda.is_available() check_requirements(('onnxruntime-gpu' if cuda else 'onnxruntime', 'onnx-simplifier>=0.4.1')) import onnxsim LOGGER.info(f'{prefix} simplifying with onnx-simplifier {onnxsim.__version__}...') model_onnx, check = onnxsim.simplify(model_onnx) assert check, 'assert check failed' onnx.save(model_onnx, f) except Exception as e: LOGGER.info(f'{prefix} simplifier failure: {e}') return f, model_onnx @try_exportdef export_openvino(file, metadata, half, prefix=colorstr('OpenVINO:')): # YOLOv5 OpenVINO export check_requirements('openvino-dev') # requires openvino-dev: https://pypi.org/project/openvino-dev/ import openvino.inference_engine as ie LOGGER.info(f'\n{prefix} starting export with openvino {ie.__version__}...') f = str(file).replace('.pt', f'_openvino_model{os.sep}') cmd = f"mo --input_model {file.with_suffix('.onnx')} --output_dir {f} --data_type {'FP16' if half else 'FP32'}" subprocess.run(cmd.split(), check=True, env=os.environ) # export yaml_save(Path(f) / file.with_suffix('.yaml').name, metadata) # add metadata.yaml return f, None @try_exportdef export_paddle(model, im, file, metadata, prefix=colorstr('PaddlePaddle:')): # YOLOv5 Paddle export check_requirements(('paddlepaddle', 'x2paddle')) import x2paddle from x2paddle.convert import pytorch2paddle LOGGER.info(f'\n{prefix} starting export with X2Paddle {x2paddle.__version__}...') f = str(file).replace('.pt', f'_paddle_model{os.sep}') pytorch2paddle(module=model, save_dir=f, jit_type='trace', input_examples=[im]) # export 
yaml_save(Path(f) / file.with_suffix('.yaml').name, metadata) # add metadata.yaml return f, None @try_exportdef export_coreml(model, im, file, int8, half, prefix=colorstr('CoreML:')): # YOLOv5 CoreML export check_requirements('coremltools') import coremltools as ct LOGGER.info(f'\n{prefix} starting export with coremltools {ct.__version__}...') f = file.with_suffix('.mlmodel') ts = torch.jit.trace(model, im, strict=False) # TorchScript model ct_model = ct.convert(ts, inputs=[ct.ImageType('image', shape=im.shape, scale=1 / 255, bias=[0, 0, 0])]) bits, mode = (8, 'kmeans_lut') if int8 else (16, 'linear') if half else (32, None) if bits < 32: if MACOS: # quantization only supported on macOS with warnings.catch_warnings(): warnings.filterwarnings("ignore", category=DeprecationWarning) # suppress numpy==1.20 float warning ct_model = ct.models.neural_network.quantization_utils.quantize_weights(ct_model, bits, mode) else: print(f'{prefix} quantization only supported on macOS, skipping...') ct_model.save(f) return f, ct_model @try_exportdef export_engine(model, im, file, half, dynamic, simplify, workspace=4, verbose=False, prefix=colorstr('TensorRT:')): # YOLOv5 TensorRT export https://developer.nvidia.com/tensorrt assert im.device.type != 'cpu', 'export running on CPU but must be on GPU, i.e. 
`python export.py --device 0`' try: import tensorrt as trt except Exception: if platform.system() == 'Linux': check_requirements('nvidia-tensorrt', cmds='-U --index-url https://pypi.ngc.nvidia.com') import tensorrt as trt if trt.__version__[0] == '7': # TensorRT 7 handling https://github.com/ultralytics/yolov5/issues/6012 grid = model.model[-1].anchor_grid model.model[-1].anchor_grid = [a[..., :1, :1, :] for a in grid] export_onnx(model, im, file, 12, dynamic, simplify) # opset 12 model.model[-1].anchor_grid = grid else: # TensorRT >= 8 check_version(trt.__version__, '8.0.0', hard=True) # require tensorrt>=8.0.0 export_onnx(model, im, file, 12, dynamic, simplify) # opset 12 onnx = file.with_suffix('.onnx') LOGGER.info(f'\n{prefix} starting export with TensorRT {trt.__version__}...') assert onnx.exists(), f'failed to export ONNX file: {onnx}' f = file.with_suffix('.engine') # TensorRT engine file logger = trt.Logger(trt.Logger.INFO) if verbose: logger.min_severity = trt.Logger.Severity.VERBOSE builder = trt.Builder(logger) config = builder.create_builder_config() config.max_workspace_size = workspace * 1 << 30 # config.set_memory_pool_limit(trt.MemoryPoolType.WORKSPACE, workspace << 30) # fix TRT 8.4 deprecation notice flag = (1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)) network = builder.create_network(flag) parser = trt.OnnxParser(network, logger) if not parser.parse_from_file(str(onnx)): raise RuntimeError(f'failed to load ONNX file: {onnx}') inputs = [network.get_input(i) for i in range(network.num_inputs)] outputs = [network.get_output(i) for i in range(network.num_outputs)] for inp in inputs: LOGGER.info(f'{prefix} input "{inp.name}" with shape{inp.shape} {inp.dtype}') for out in outputs: LOGGER.info(f'{prefix} output "{out.name}" with shape{out.shape} {out.dtype}') if dynamic: if im.shape[0] <= 1: LOGGER.warning(f"{prefix} WARNING ⚠️ --dynamic model requires maximum --batch-size argument") profile = builder.create_optimization_profile() for inp 
in inputs:
                profile.set_shape(inp.name, (1, *im.shape[1:]), (max(1, im.shape[0] // 2), *im.shape[1:]), im.shape)
            config.add_optimization_profile(profile)

        LOGGER.info(f'{prefix} building FP{16 if builder.platform_has_fast_fp16 and half else 32} engine as {f}')
        if builder.platform_has_fast_fp16 and half:
            config.set_flag(trt.BuilderFlag.FP16)
        with builder.build_engine(network, config) as engine, open(f, 'wb') as t:
            t.write(engine.serialize())
        return f, None


    @try_export
    def export_saved_model(model, im, file, dynamic, tf_nms=False, agnostic_nms=False, topk_per_class=100,
                           topk_all=100, iou_thres=0.45, conf_thres=0.25, keras=False,
                           prefix=colorstr('TensorFlow SavedModel:')):
        # YOLOv5 TensorFlow SavedModel export
        try:
            import tensorflow as tf
        except Exception:
            check_requirements(f"tensorflow{'' if torch.cuda.is_available() else '-macos' if MACOS else '-cpu'}")
            import tensorflow as tf
        from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2

        from models.tf import TFModel

        LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')
        f = str(file).replace('.pt', '_saved_model')
        batch_size, ch, *imgsz = list(im.shape)  # BCHW

        tf_model = TFModel(cfg=model.yaml, model=model, nc=model.nc, imgsz=imgsz)
        im = tf.zeros((batch_size, *imgsz, ch))  # BHWC order for TensorFlow
        _ = tf_model.predict(im, tf_nms, agnostic_nms, topk_per_class, topk_all, iou_thres, conf_thres)
        inputs = tf.keras.Input(shape=(*imgsz, ch), batch_size=None if dynamic else batch_size)
        outputs = tf_model.predict(inputs, tf_nms, agnostic_nms, topk_per_class, topk_all, iou_thres, conf_thres)
        keras_model = tf.keras.Model(inputs=inputs, outputs=outputs)
        keras_model.trainable = False
        keras_model.summary()
        if keras:
            keras_model.save(f, save_format='tf')
        else:
            spec = tf.TensorSpec(keras_model.inputs[0].shape, keras_model.inputs[0].dtype)
            m = tf.function(lambda x: keras_model(x))  # full model
            m = m.get_concrete_function(spec)
            frozen_func = convert_variables_to_constants_v2(m)
            tfm = tf.Module()
            tfm.__call__ = tf.function(lambda x: frozen_func(x)[:4] if tf_nms else frozen_func(x), [spec])
            tfm.__call__(im)
            tf.saved_model.save(tfm,
                                f,
                                options=tf.saved_model.SaveOptions(experimental_custom_gradients=False) if check_version(
                                    tf.__version__, '2.6') else tf.saved_model.SaveOptions())
        return f, keras_model


    @try_export
    def export_pb(keras_model, file, prefix=colorstr('TensorFlow GraphDef:')):
        # YOLOv5 TensorFlow GraphDef *.pb export https://github.com/leimao/Frozen_Graph_TensorFlow
        import tensorflow as tf
        from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2

        LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')
        f = file.with_suffix('.pb')

        m = tf.function(lambda x: keras_model(x))  # full model
        m = m.get_concrete_function(tf.TensorSpec(keras_model.inputs[0].shape, keras_model.inputs[0].dtype))
        frozen_func = convert_variables_to_constants_v2(m)
        frozen_func.graph.as_graph_def()
        tf.io.write_graph(graph_or_graph_def=frozen_func.graph, logdir=str(f.parent), name=f.name, as_text=False)
        return f, None


    @try_export
    def export_tflite(keras_model, im, file, int8, data, nms, agnostic_nms, prefix=colorstr('TensorFlow Lite:')):
        # YOLOv5 TensorFlow Lite export
        import tensorflow as tf

        LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')
        batch_size, ch, *imgsz = list(im.shape)  # BCHW
        f = str(file).replace('.pt', '-fp16.tflite')

        converter = tf.lite.TFLiteConverter.from_keras_model(keras_model)
        converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS]
        converter.target_spec.supported_types = [tf.float16]
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
        if int8:
            from models.tf import representative_dataset_gen
            dataset = LoadImages(check_dataset(check_yaml(data))['train'], img_size=imgsz, auto=False)
            converter.representative_dataset = lambda: representative_dataset_gen(dataset, ncalib=100)
            converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS_INT8]
            converter.target_spec.supported_types = []
            converter.inference_input_type = tf.uint8  # or tf.int8
            converter.inference_output_type = tf.uint8  # or tf.int8
            converter.experimental_new_quantizer = True
            f = str(file).replace('.pt', '-int8.tflite')
        if nms or agnostic_nms:
            converter.target_spec.supported_ops.append(tf.lite.OpsSet.SELECT_TF_OPS)

        tflite_model = converter.convert()
        open(f, "wb").write(tflite_model)
        return f, None


    @try_export
    def export_edgetpu(file, prefix=colorstr('Edge TPU:')):
        # YOLOv5 Edge TPU export https://coral.ai/docs/edgetpu/models-intro/
        cmd = 'edgetpu_compiler --version'
        help_url = 'https://coral.ai/docs/edgetpu/compiler/'
        assert platform.system() == 'Linux', f'export only supported on Linux. See {help_url}'
        if subprocess.run(f'{cmd} >/dev/null', shell=True).returncode != 0:
            LOGGER.info(f'\n{prefix} export requires Edge TPU compiler. Attempting install from {help_url}')
            sudo = subprocess.run('sudo --version >/dev/null', shell=True).returncode == 0  # sudo installed on system
            for c in (
                    'curl https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -',
                    'echo "deb https://packages.cloud.google.com/apt coral-edgetpu-stable main" | sudo tee /etc/apt/sources.list.d/coral-edgetpu.list',
                    'sudo apt-get update',
                    'sudo apt-get install edgetpu-compiler'):
                subprocess.run(c if sudo else c.replace('sudo ', ''), shell=True, check=True)
        ver = subprocess.run(cmd, shell=True, capture_output=True, check=True).stdout.decode().split()[-1]

        LOGGER.info(f'\n{prefix} starting export with Edge TPU compiler {ver}...')
        f = str(file).replace('.pt', '-int8_edgetpu.tflite')  # Edge TPU model
        f_tfl = str(file).replace('.pt', '-int8.tflite')  # TFLite model

        cmd = f"edgetpu_compiler -s -d -k 10 --out_dir {file.parent} {f_tfl}"
        subprocess.run(cmd.split(), check=True)
        return f, None


    @try_export
    def export_tfjs(file, prefix=colorstr('TensorFlow.js:')):
        # YOLOv5 TensorFlow.js export
        check_requirements('tensorflowjs')
        import tensorflowjs as tfjs

        LOGGER.info(f'\n{prefix} starting export with tensorflowjs {tfjs.__version__}...')
        f = str(file).replace('.pt', '_web_model')  # js dir
        f_pb = file.with_suffix('.pb')  # *.pb path
        f_json = f'{f}/model.json'  # *.json path

        cmd = f'tensorflowjs_converter --input_format=tf_frozen_model ' \
              f'--output_node_names=Identity,Identity_1,Identity_2,Identity_3 {f_pb} {f}'
        subprocess.run(cmd.split())

        json = Path(f_json).read_text()
        with open(f_json, 'w') as j:  # sort JSON Identity_* in ascending order
            subst = re.sub(
                r'{"outputs": {"Identity.?.?": {"name": "Identity.?.?"}, '
                r'"Identity.?.?": {"name": "Identity.?.?"}, '
                r'"Identity.?.?": {"name": "Identity.?.?"}, '
                r'"Identity.?.?": {"name": "Identity.?.?"}}}', r'{"outputs": {"Identity": {"name": "Identity"}, '
                r'"Identity_1": {"name": "Identity_1"}, '
                r'"Identity_2": {"name": "Identity_2"}, '
                r'"Identity_3": {"name": "Identity_3"}}}', json)
            j.write(subst)
        return f, None


    @smart_inference_mode()
    def run(
            data=ROOT / 'data/coco128.yaml',  # 'dataset.yaml path'
            weights=ROOT / 'yolov5s.pt',  # weights path
            imgsz=(640, 640),  # image (height, width)
            batch_size=1,  # batch size
            device='cpu',  # cuda device, i.e. 0 or 0,1,2,3 or cpu
            include=('torchscript', 'onnx'),  # include formats
            half=False,  # FP16 half-precision export
            inplace=False,  # set YOLOv5 Detect() inplace=True
            keras=False,  # use Keras
            optimize=False,  # TorchScript: optimize for mobile
            int8=False,  # CoreML/TF INT8 quantization
            dynamic=False,  # ONNX/TF/TensorRT: dynamic axes
            simplify=False,  # ONNX: simplify model
            opset=12,  # ONNX: opset version
            verbose=False,  # TensorRT: verbose log
            workspace=4,  # TensorRT: workspace size (GB)
            nms=False,  # TF: add NMS to model
            agnostic_nms=False,  # TF: add agnostic NMS to model
            topk_per_class=100,  # TF.js NMS: topk per class to keep
            topk_all=100,  # TF.js NMS: topk for all classes to keep
            iou_thres=0.45,  # TF.js NMS: IoU threshold
            conf_thres=0.25,  # TF.js NMS: confidence threshold
    ):
        t = time.time()
        include = [x.lower() for x in include]  # to lowercase
        fmts = tuple(export_formats()['Argument'][1:])  # --include arguments
        flags = [x in include for x in fmts]
        assert sum(flags) == len(include), f'ERROR: Invalid --include {include}, valid --include arguments are {fmts}'
        jit, onnx, xml, engine, coreml, saved_model, pb, tflite, edgetpu, tfjs, paddle = flags  # export booleans
        file = Path(url2file(weights) if str(weights).startswith(('http:/', 'https:/')) else weights)  # PyTorch weights

        # Load PyTorch model
        device = select_device(device)
        if half:
            assert device.type != 'cpu' or coreml, '--half only compatible with GPU export, i.e. use --device 0'
            assert not dynamic, '--half not compatible with --dynamic, i.e. use either --half or --dynamic but not both'
        model = attempt_loadf(weights, device=device, inplace=True, fuse=True)  # load FP32 model

        # Checks
        imgsz *= 2 if len(imgsz) == 1 else 1  # expand
        if optimize:
            assert device.type == 'cpu', '--optimize not compatible with cuda devices, i.e. use --device cpu'

        # Input
        gs = int(max(model.stride))  # grid size (max stride)
        imgsz = [check_img_size(x, gs) for x in imgsz]  # verify img_size are gs-multiples
        im = torch.zeros(batch_size, 3, *imgsz).to(device)  # image size(1,3,320,192) BCHW iDetection

        # Update model
        model.eval()
        for k, m in model.named_modules():
            m._non_persistent_buffers_set = set()  # pytorch 1.6.0 compatibility
            if isinstance(m, (Detect, LmkDetect)):
                m.inplace = inplace
                m.dynamic = dynamic
                m.export = True

        for _ in range(2):
            y = model(im)  # dry runs
        if half and not coreml:
            im, model = im.half(), model.half()  # to FP16
        shape = tuple((y[0] if isinstance(y, tuple) else y).shape)  # model output shape
        metadata = {'stride': int(max(model.stride)), 'names': model.names}  # model metadata
        LOGGER.info(f"\n{colorstr('PyTorch:')} starting from {file} with output shape {shape} ({file_size(file):.1f} MB)")

        # Exports
        f = [''] * len(fmts)  # exported filenames
        warnings.filterwarnings(action='ignore', category=torch.jit.TracerWarning)  # suppress TracerWarning
        if jit:  # TorchScript
            f[0], _ = export_torchscript(model, im, file, optimize)
        if engine:  # TensorRT required before ONNX
            f[1], _ = export_engine(model, im, file, half, dynamic, simplify, workspace, verbose)
        if onnx or xml:  # OpenVINO requires ONNX
            f[2], _ = export_onnx(model, im, file, opset, dynamic, simplify)
        if xml:  # OpenVINO
            f[3], _ = export_openvino(file, metadata, half)
        if coreml:  # CoreML
            f[4], _ = export_coreml(model, im, file, int8, half)
        if any((saved_model, pb, tflite, edgetpu, tfjs)):  # TensorFlow formats
            assert not tflite or not tfjs, 'TFLite and TF.js models must be exported separately, please pass only one type.'
            assert not isinstance(model, ClassificationModel), 'ClassificationModel export to TF formats not yet supported.'
            f[5], s_model = export_saved_model(model.cpu(),
                                               im,
                                               file,
                                               dynamic,
                                               tf_nms=nms or agnostic_nms or tfjs,
                                               agnostic_nms=agnostic_nms or tfjs,
                                               topk_per_class=topk_per_class,
                                               topk_all=topk_all,
                                               iou_thres=iou_thres,
                                               conf_thres=conf_thres,
                                               keras=keras)
            if pb or tfjs:  # pb prerequisite to tfjs
                f[6], _ = export_pb(s_model, file)
            if tflite or edgetpu:
                f[7], _ = export_tflite(s_model, im, file, int8 or edgetpu, data=data, nms=nms, agnostic_nms=agnostic_nms)
            if edgetpu:
                f[8], _ = export_edgetpu(file)
            if tfjs:
                f[9], _ = export_tfjs(file)
        if paddle:  # PaddlePaddle
            f[10], _ = export_paddle(model, im, file, metadata)

        # Finish
        f = [str(x) for x in f if x]  # filter out '' and None
        if any(f):
            cls, det, seg = (isinstance(model, x) for x in (ClassificationModel, DetectionModel, SegmentationModel))  # type
            dir = Path('segment' if seg else 'classify' if cls else '')
            h = '--half' if half else ''  # --half FP16 inference arg
            s = "# WARNING ⚠️ ClassificationModel not yet supported for PyTorch Hub AutoShape inference" if cls else \
                "# WARNING ⚠️ SegmentationModel not yet supported for PyTorch Hub AutoShape inference" if seg else ''
            LOGGER.info(f'\nExport complete ({time.time() - t:.1f}s)'
                        f"\nResults saved to {colorstr('bold', file.parent.resolve())}"
                        f"\nDetect: python {dir / ('detect.py' if det else 'predict.py')} --weights {f[-1]} {h}"
                        f"\nValidate: python {dir / 'val.py'} --weights {f[-1]} {h}"
                        f"\nPyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', '{f[-1]}') {s}"
                        f"\nVisualize: https://netron.app")
        return f  # return list of exported files/dirs


    def parse_opt():
        parser = argparse.ArgumentParser()
        parser.add_argument('--data', type=str, default=ROOT / 'data/widerface.yaml', help='dataset.yaml path')
        parser.add_argument('--weights', nargs='+', type=str, default=ROOT / 'yolov5n-lmk.pt', help='model.pt path(s)')
        parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640, 640], help='image (h, w)')
        parser.add_argument('--batch-size', type=int, default=1, help='batch size')
        parser.add_argument('--device', default='cpu', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
        parser.add_argument('--half', action='store_true', help='FP16 half-precision export')
        parser.add_argument('--inplace', action='store_true', help='set YOLOv5 Detect() inplace=True')
        parser.add_argument('--keras', action='store_true', help='TF: use Keras')
        parser.add_argument('--optimize', action='store_true', help='TorchScript: optimize for mobile')
        parser.add_argument('--int8', action='store_true', help='CoreML/TF INT8 quantization')
        parser.add_argument('--dynamic', action='store_true', help='ONNX/TF/TensorRT: dynamic axes')
        parser.add_argument('--simplify', action='store_true', help='ONNX: simplify model')
        parser.add_argument('--opset', type=int, default=11, help='ONNX: opset version')
        parser.add_argument('--verbose', action='store_true', help='TensorRT: verbose log')
        parser.add_argument('--workspace', type=int, default=4, help='TensorRT: workspace size (GB)')
        parser.add_argument('--nms', action='store_true', help='TF: add NMS to model')
        parser.add_argument('--agnostic-nms', action='store_true', help='TF: add agnostic NMS to model')
        parser.add_argument('--topk-per-class', type=int, default=100, help='TF.js NMS: topk per class to keep')
        parser.add_argument('--topk-all', type=int, default=100, help='TF.js NMS: topk for all classes to keep')
        parser.add_argument('--iou-thres', type=float, default=0.45, help='TF.js NMS: IoU threshold')
        parser.add_argument('--conf-thres', type=float, default=0.25, help='TF.js NMS: confidence threshold')
        parser.add_argument(
            '--include',
            nargs='+',
            default=['onnx'],
            help='torchscript, onnx, openvino, engine, coreml, saved_model, pb, tflite, edgetpu, tfjs, paddle')
        opt = parser.parse_args()
        print_args(vars(opt))
        return opt


    def main(opt):
        for opt.weights in (opt.weights if isinstance(opt.weights, list) else [opt.weights]):
            run(**vars(opt))


    if __name__ == "__main__":
        opt = parse_opt()
        main(opt)

After adding the three files above, create a landmark folder inside the utils folder.

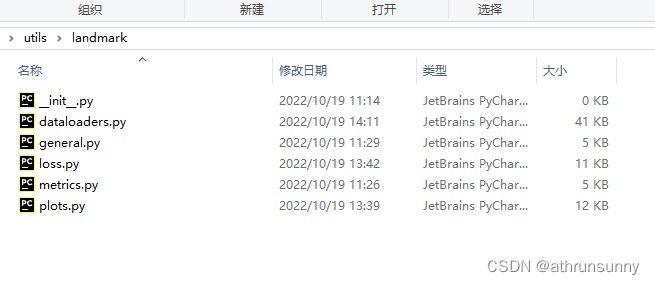

utils/landmark/general.py
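
The `scale_coords_landmarks` helper in this file maps the five landmark points from the letterboxed network input back to the original image: it subtracts the padding, divides by the resize gain, and clamps to the image bounds. Here is a minimal sketch of that arithmetic in plain Python, assuming the symmetric padding that the function itself computes when `ratio_pad` is `None`. The name `undo_letterbox` is hypothetical and not part of the repository.

```python
def undo_letterbox(points, net_shape, orig_shape):
    """Map (x, y) points from the letterboxed input back to the original image.

    points: list of (x, y) tuples in network-input pixels
    net_shape, orig_shape: (height, width)
    Hypothetical helper for illustration only; mirrors the gain/pad formulas
    used by scale_coords_landmarks when ratio_pad is None.
    """
    # Resize gain: the smaller of the height and width ratios
    gain = min(net_shape[0] / orig_shape[0], net_shape[1] / orig_shape[1])
    # Symmetric padding added on each side (x first, then y)
    pad_x = (net_shape[1] - orig_shape[1] * gain) / 2
    pad_y = (net_shape[0] - orig_shape[0] * gain) / 2

    restored = []
    for x, y in points:
        x = (x - pad_x) / gain  # remove padding, then undo the resize
        y = (y - pad_y) / gain
        x = min(max(x, 0.0), float(orig_shape[1]))  # clamp to image width
        y = min(max(y, 0.0), float(orig_shape[0]))  # clamp to image height
        restored.append((x, y))
    return restored


# A 480x640 (h, w) image letterboxed into 640x640: gain is 1.0 and
# 80 px of padding sit above and below the image.
landmarks = [(320.0, 320.0), (100.0, 80.0), (700.0, 10.0)]
restored = undo_letterbox(landmarks, (640, 640), (480, 640))
print(restored)
```

The third point lies outside the image after the padding is removed, so it is clamped to the nearest border, the same behaviour as the `clamp_` calls in the real function.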

    # YOLOv5 🚀 by Ultralytics, GPL-3.0 license
    """
    General utils
    """

    import time

    import torch
    import torchvision

    from ..metrics import box_iou
    from ..general import xywh2xyxy


    # for face landmark
    def non_max_suppression_face(prediction, conf_thres=0.25, iou_thres=0.45, classes=None, agnostic=False, labels=()):
        """Performs Non-Maximum Suppression (NMS) on inference results
        Returns:
             detections with shape: nx16 (x1, y1, x2, y2, conf, keypoint*10, cls)
        """

        nc = prediction.shape[2] - 15  # number of classes
        xc = prediction[..., 4] > conf_thres  # candidates

        # Settings
        min_wh, max_wh = 2, 4096  # (pixels) minimum and maximum box width and height
        time_limit = 10.0  # seconds to quit after
        redundant = True  # require redundant detections
        multi_label = nc > 1  # multiple labels per box (adds 0.5ms/img)
        merge = False  # use merge-NMS

        t = time.time()
        output = [torch.zeros((0, 16), device=prediction.device)] * prediction.shape[0]
        for xi, x in enumerate(prediction):  # image index, image inference
            # Apply constraints
            # x[((x[..., 2:4] < min_wh) | (x[..., 2:4] > max_wh)).any(1), 4] = 0  # width-height
            x = x[xc[xi]]  # confidence

            # Cat apriori labels if autolabelling
            if labels and len(labels[xi]):
                l = labels[xi]
                v = torch.zeros((len(l), nc + 15), device=x.device)
                v[:, :4] = l[:, 1:5]  # box
                v[:, 4] = 1.0  # conf
                v[range(len(l)), l[:, 0].long() + 15] = 1.0  # cls
                x = torch.cat((x, v), 0)

            # If none remain process next image
            if not x.shape[0]:
                continue

            # Compute conf
            x[:, 15:] *= x[:, 4:5]  # conf = obj_conf * cls_conf

            # Box (center x, center y, width, height) to (x1, y1, x2, y2)
            box = xywh2xyxy(x[:, :4])

            # Detections matrix nx16 (xyxy, conf, landmarks, cls)
            if multi_label:
                i, j = (x[:, 15:] > conf_thres).nonzero(as_tuple=False).T
                x = torch.cat((box[i], x[i, j + 15, None], x[i, 5:15], j[:, None].float()), 1)
            else:  # best class only
                conf, j = x[:, 15:].max(1, keepdim=True)
                x = torch.cat((box, conf, x[:, 5:15], j.float()), 1)[conf.view(-1) > conf_thres]

            # Filter by class (the class index is column 15 of the nx16 row)
            if classes is not None:
                x = x[(x[:, 15:16] == torch.tensor(classes, device=x.device)).any(1)]

            # If none remain process next image
            n = x.shape[0]  # number of boxes
            if not n:
                continue

            # Batched NMS
            c = x[:, 15:16] * (0 if agnostic else max_wh)  # classes
            boxes, scores = x[:, :4] + c, x[:, 4]  # boxes (offset by class), scores
            i = torchvision.ops.nms(boxes, scores, iou_thres)  # NMS
            # if i.shape[0] > max_det:  # limit detections
            #     i = i[:max_det]
            if merge and (1 < n < 3E3):  # Merge NMS (boxes merged using weighted mean)
                # update boxes as boxes(i,4) = weights(i,n) * boxes(n,4)
                iou = box_iou(boxes[i], boxes) > iou_thres  # iou matrix
                weights = iou * scores[None]  # box weights
                x[i, :4] = torch.mm(weights, x[:, :4]).float() / weights.sum(1, keepdim=True)  # merged boxes
                if redundant:
                    i = i[iou.sum(1) > 1]  # require redundancy

            output[xi] = x[i]
            if (time.time() - t) > time_limit:
                break  # time limit exceeded

        return output


    def scale_coords_landmarks(img1_shape, coords, img0_shape, ratio_pad=None):
        # Rescale landmark coords (x1, y1, ..., x5, y5) from img1_shape to img0_shape
        if ratio_pad is None:  # calculate from img0_shape
            gain = min(img1_shape[0] / img0_shape[0], img1_shape[1] / img0_shape[1])  # gain = old / new
            pad = (img1_shape[1] - img0_shape[1] * gain) / 2, (img1_shape[0] - img0_shape[0] * gain) / 2  # wh padding
        else:
            gain = ratio_pad[0][0]
            pad = ratio_pad[1]

        coords[:, [0, 2, 4, 6, 8]] -= pad[0]  # x padding
        coords[:, [1, 3, 5, 7, 9]] -= pad[1]  # y padding
        coords[:, :10] /= gain
        # clip_coords(coords, img0_shape)
        coords[:, 0].clamp_(0, img0_shape[1])  # x1
        coords[:, 1].clamp_(0, img0_shape[0])  # y1
        coords[:, 2].clamp_(0, img0_shape[1])  # x2
        coords[:, 3].clamp_(0, img0_shape[0])  # y2
        coords[:, 4].clamp_(0, img0_shape[1])  # x3
        coords[:, 5].clamp_(0, img0_shape[0])  # y3
        coords[:, 6].clamp_(0, img0_shape[1])  # x4
        coords[:, 7].clamp_(0, img0_shape[0])  # y4
        coords[:, 8].clamp_(0, img0_shape[1])  # x5
        coords[:, 9].clamp_(0, img0_shape[0])  # y5
        return coords

utils/landmark/loss.py